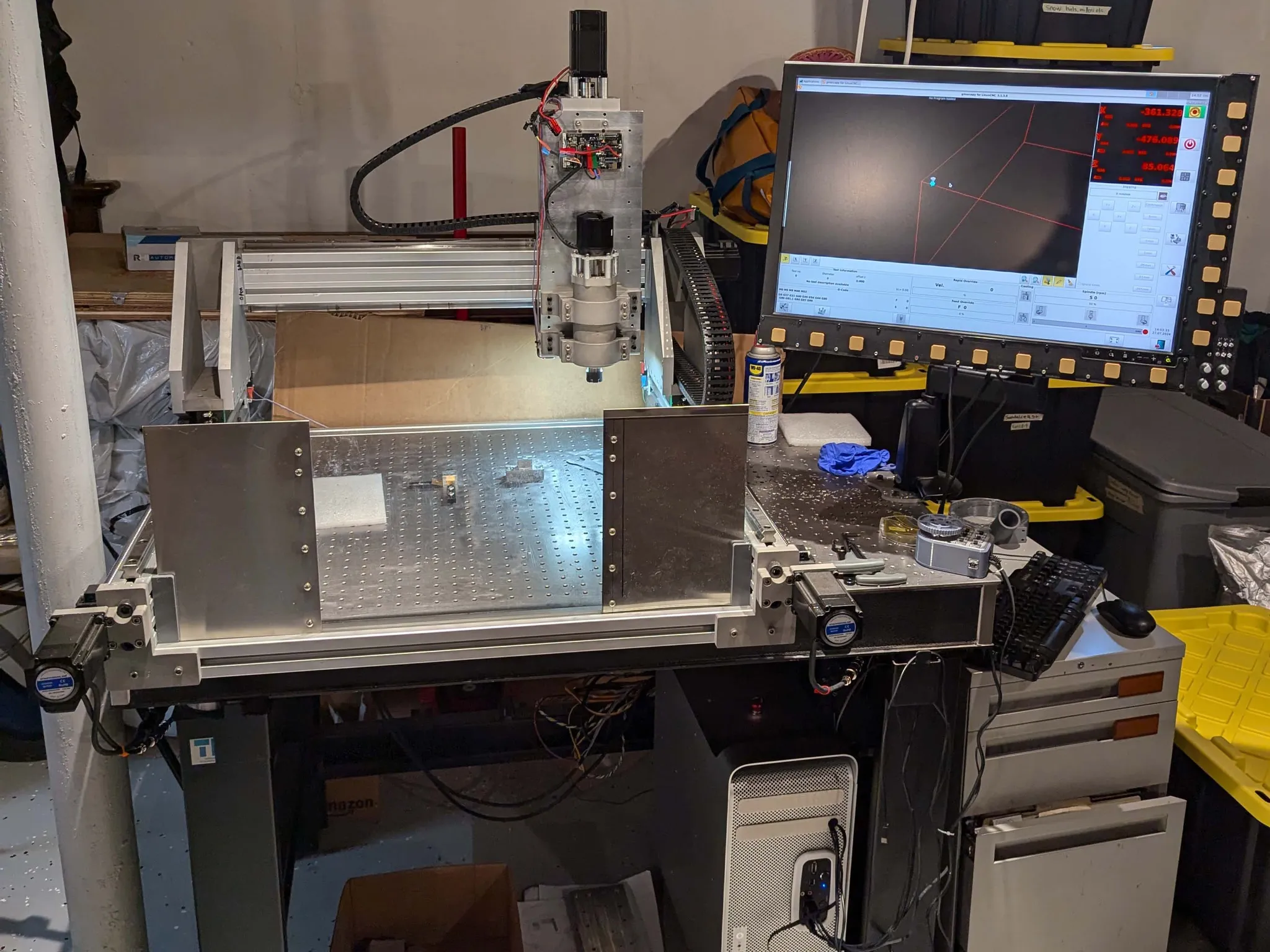

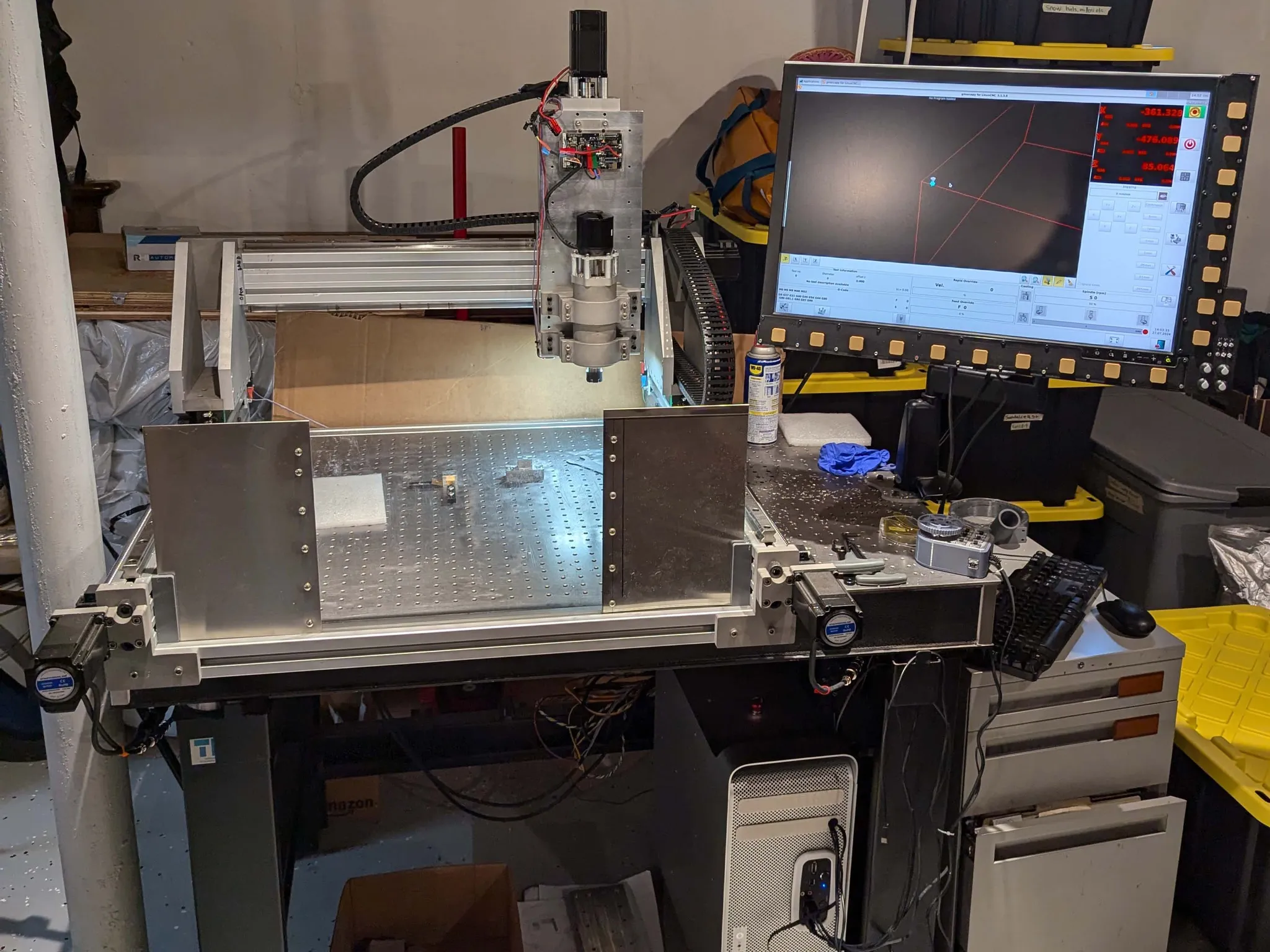

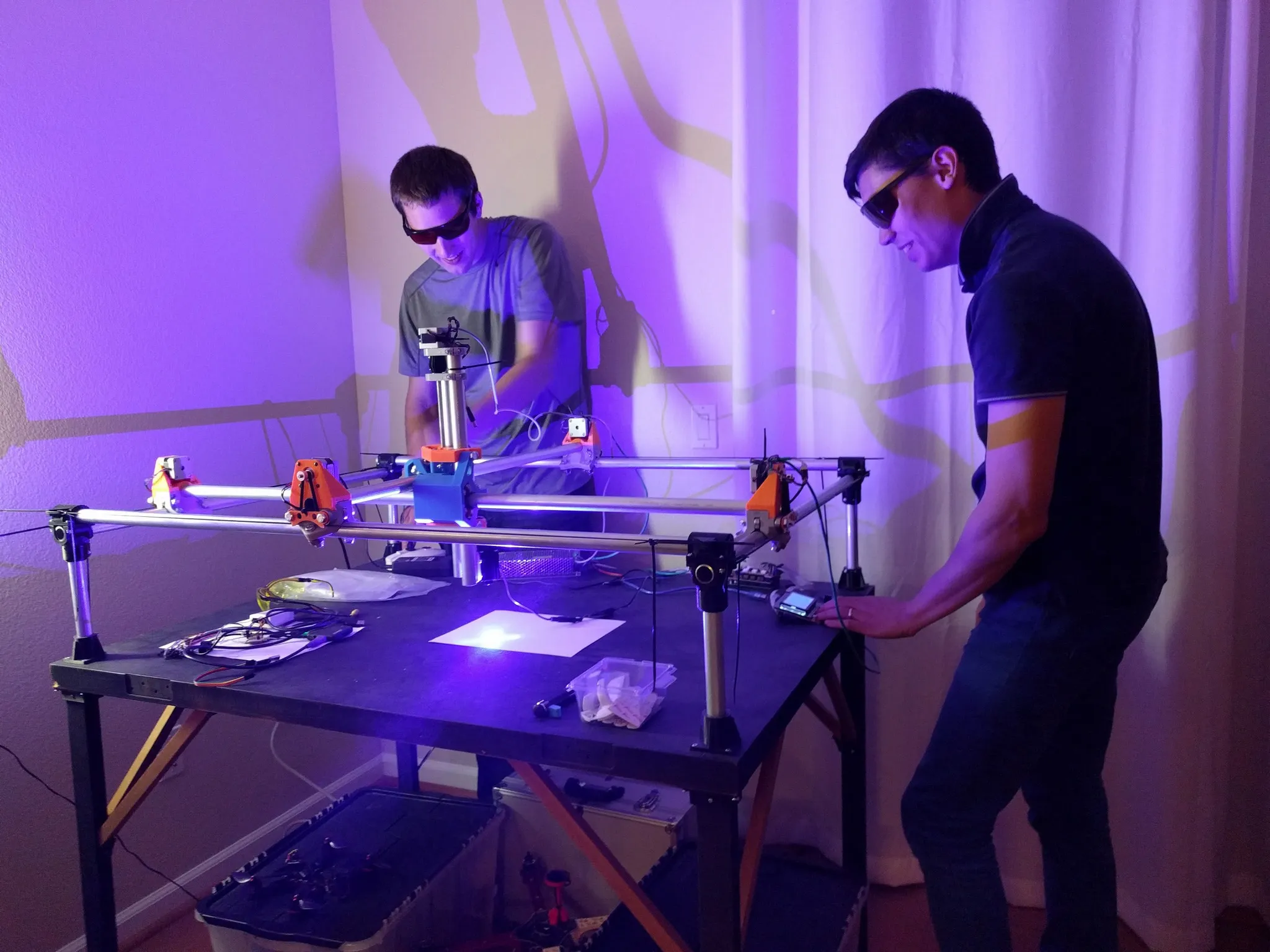

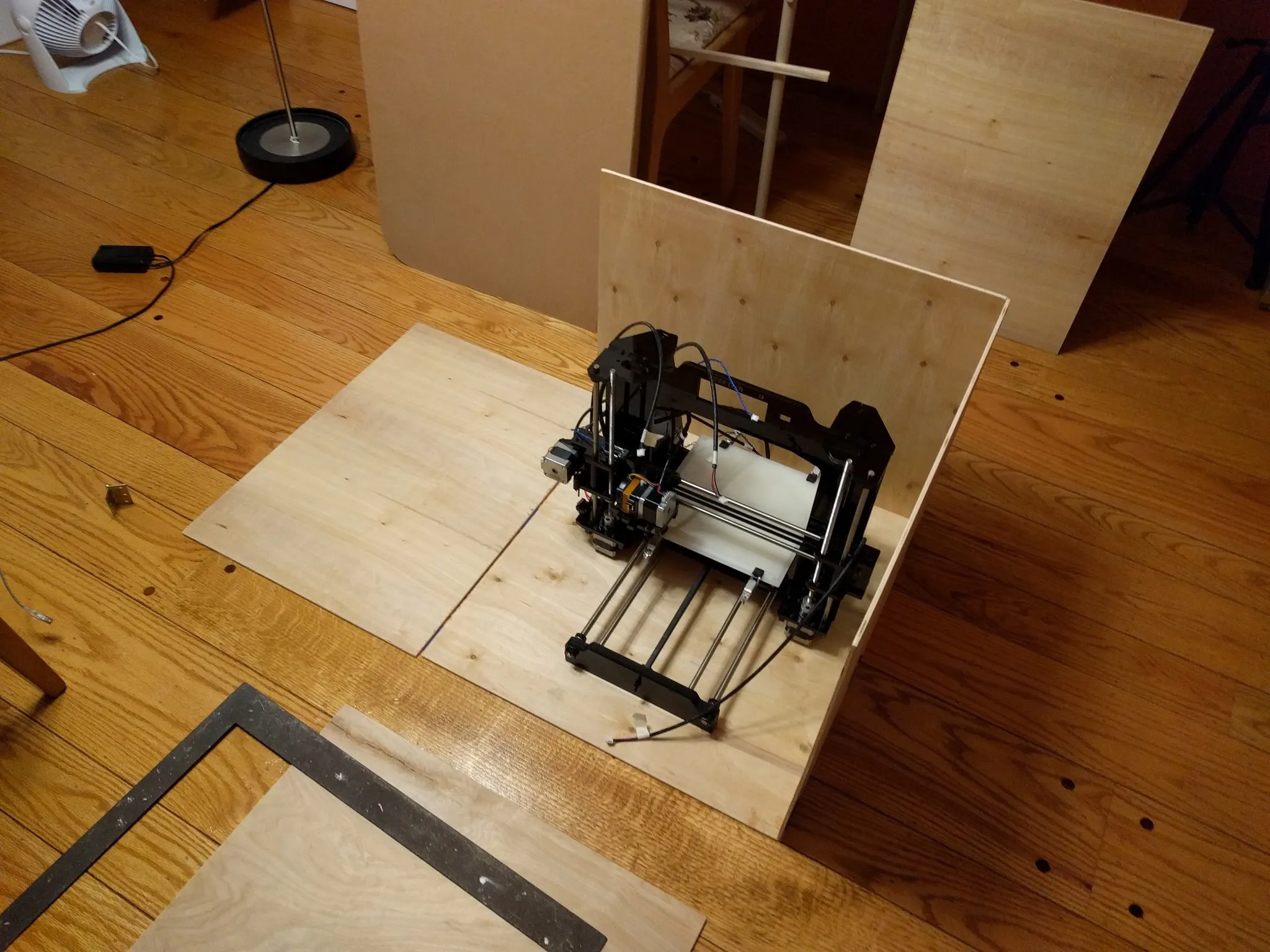

Rigid Router DIY CNC

An open-source design and build-guide for a rigid router CNC machine for milling aluminum and other materials.

How does drying your 3D printer filament improve print quality?

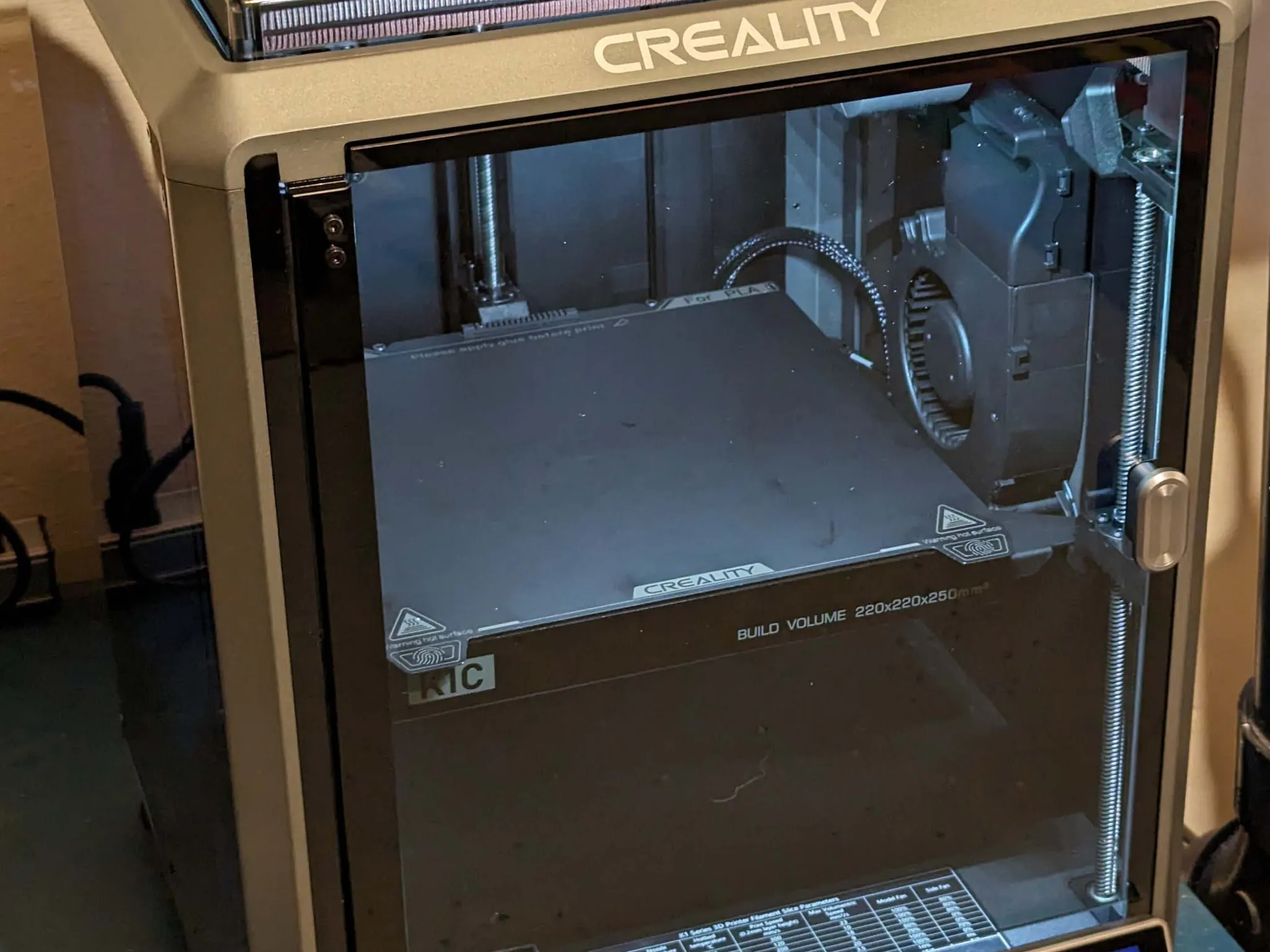

An in-depth review and long-term assessment of the Creality K1C, an enclosed CoreXY 3D printer optimized for high-speed performance and technical materials.

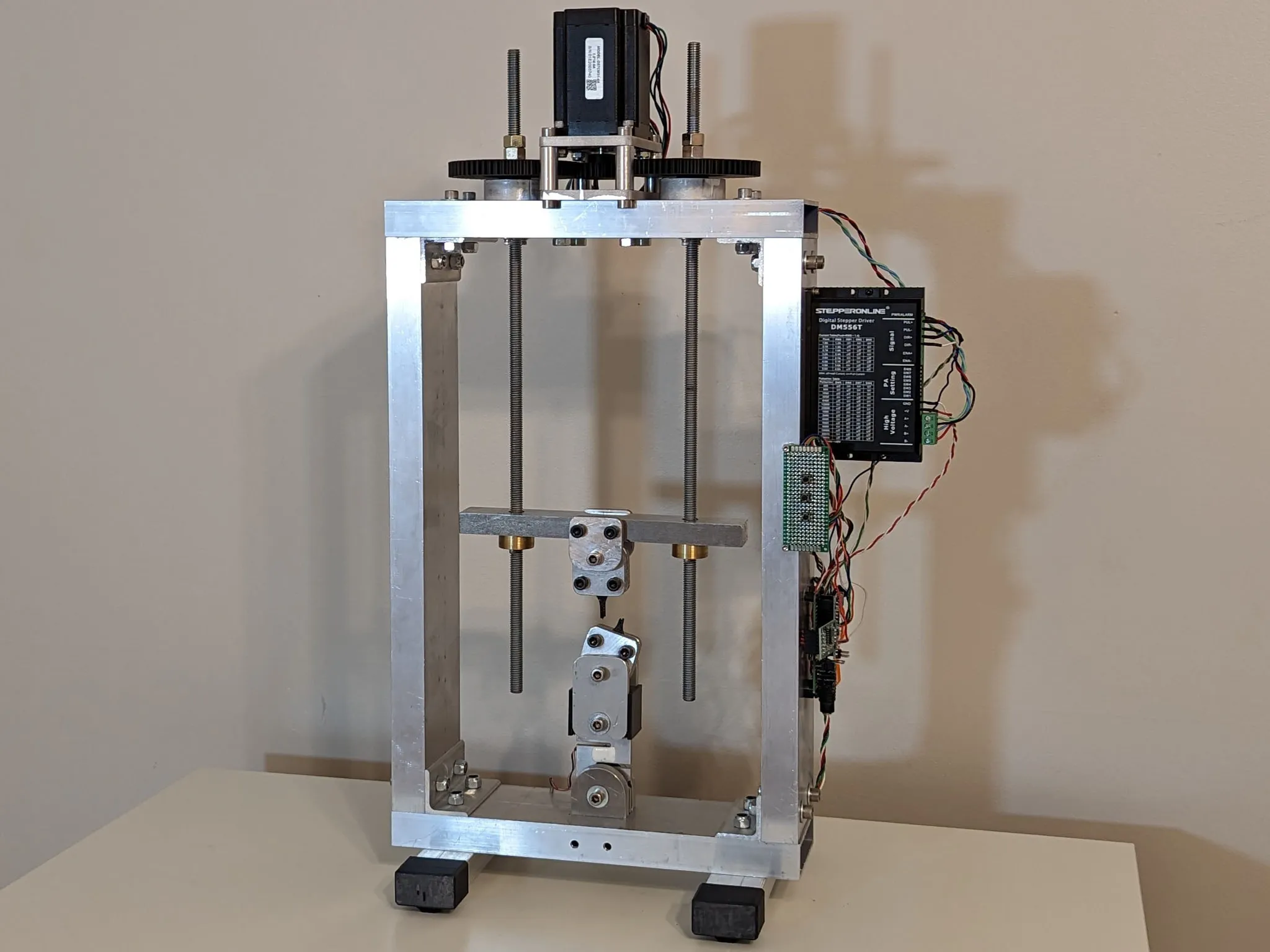

Tensile strength testing procedure and results for a range of 3D printer filaments.

This article covers the ballscrew and spindle upgrade to my G0704 Mill.

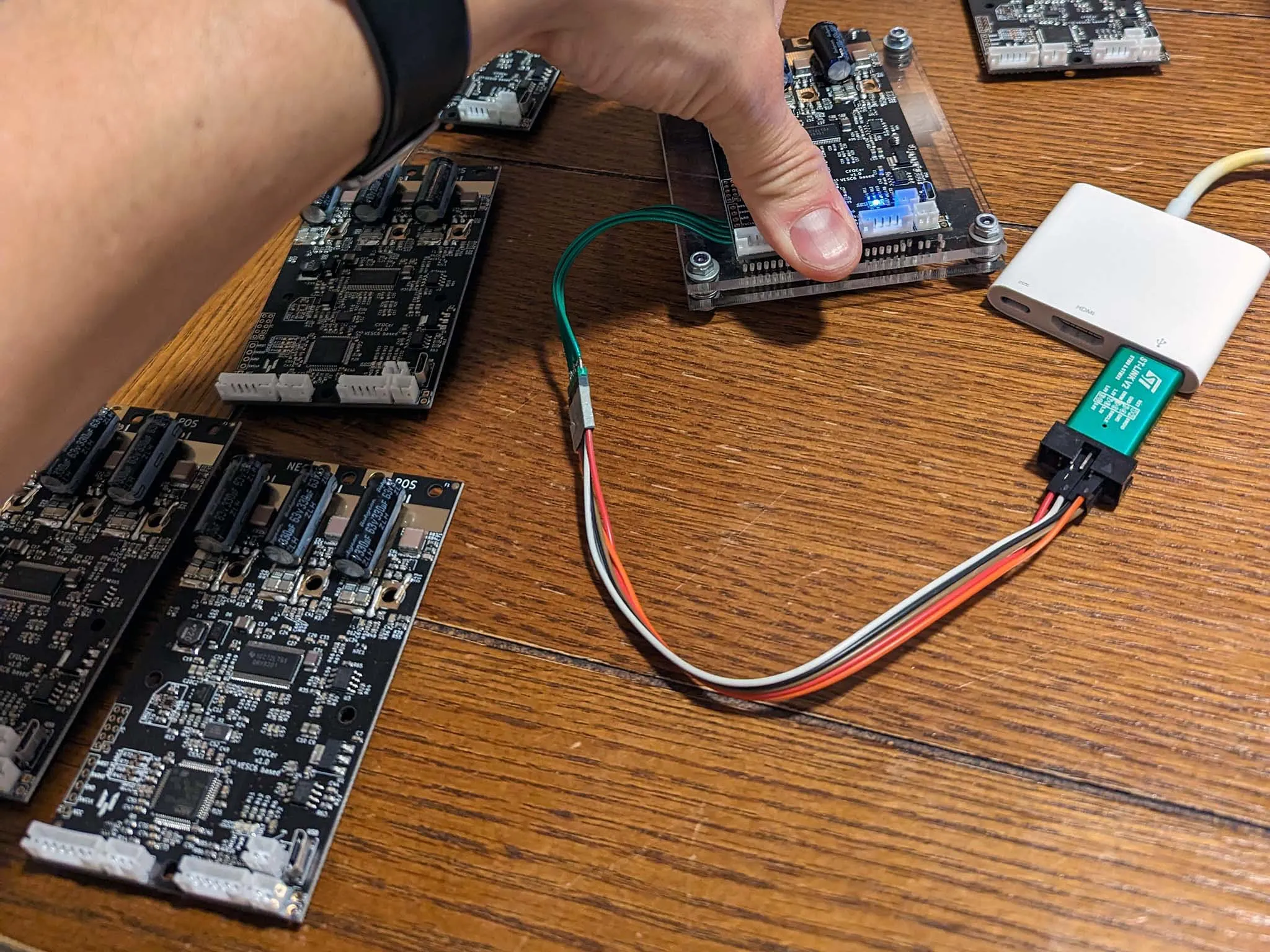

CFOCer VESC V6 Brushless Motor Controller Flashing and Setup Guide.

This article walks through the conversion of a G0704 mini mill to a CNC machine using LinuxCNC. I avoid the cost of a ballscrew conversion and instead use software backlash compensation with the stock leadscrews, and am still able to achieve great results.

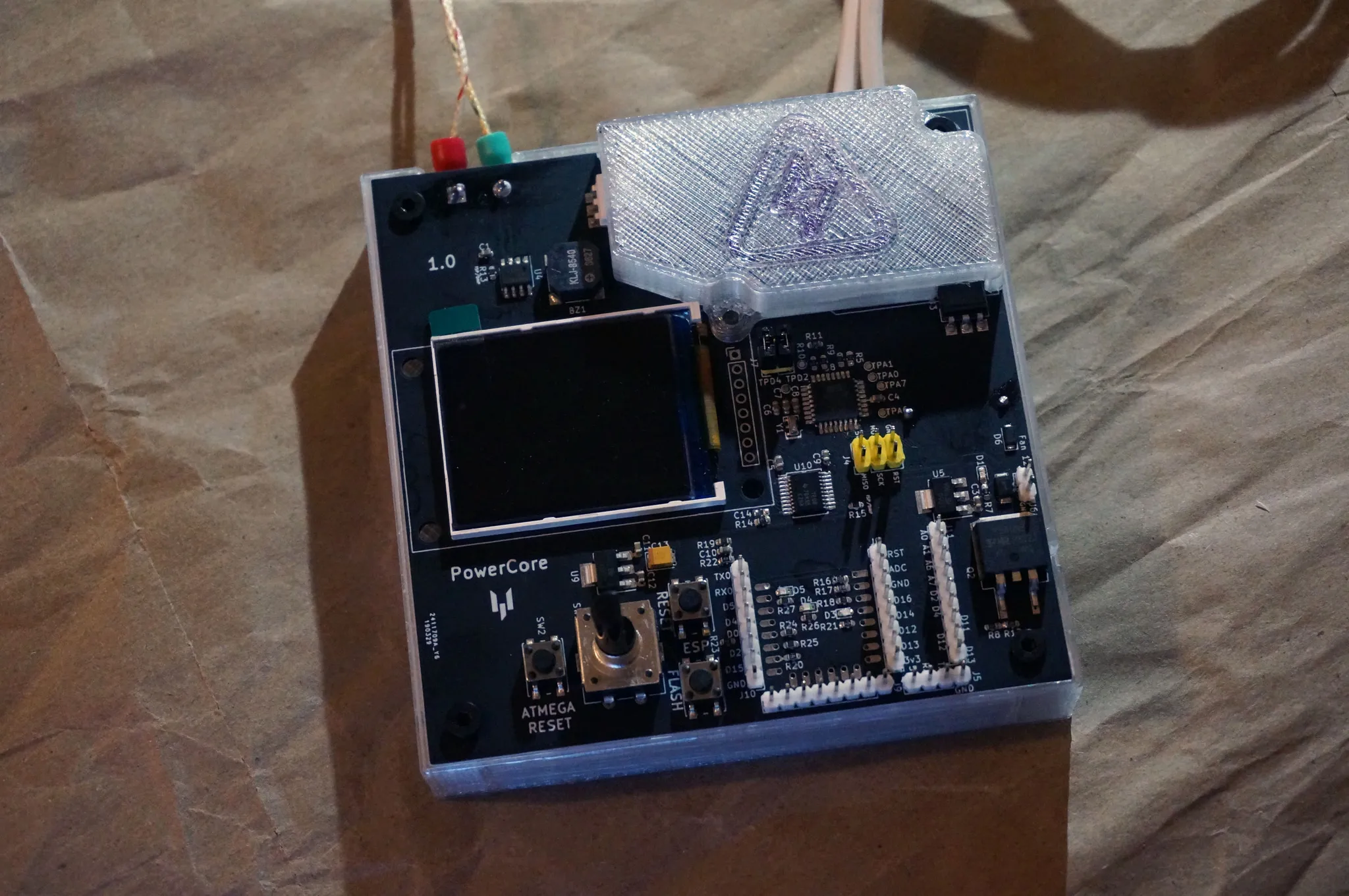

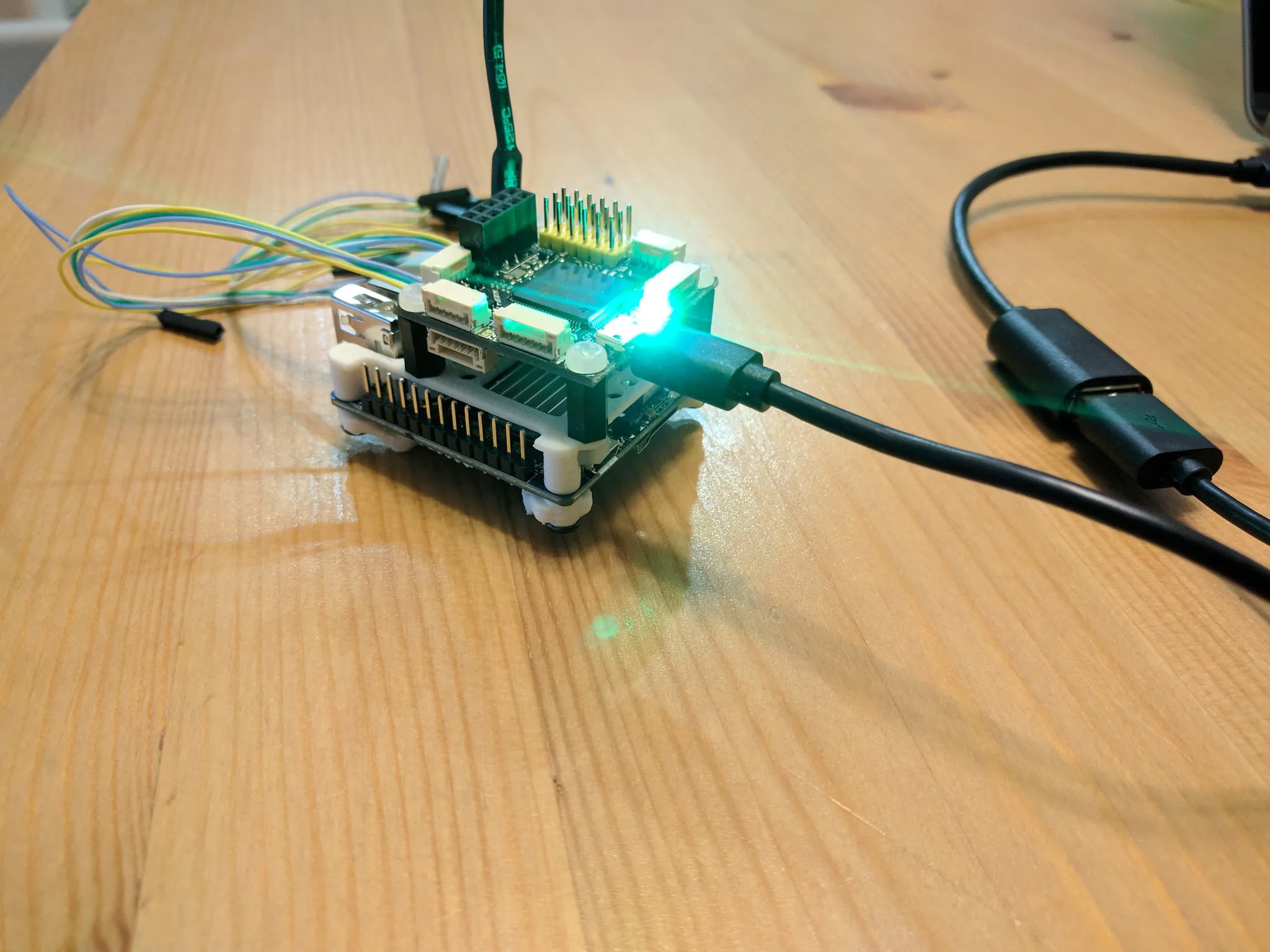

This post contains an overview and build guide for the FluxLamp soldering reflow oven. Built with a Vertile PowerCore, the FluxLamp is designed to be inexpensive, easy to make and easy to use. I hope this project will enable makers and hackers to start doing their own reflow soldering!

Tired of playing with safe, low-voltage dev boards? Want complete control over your AC-powered device? How about WiFi programmability, remote logging, integrated temperature sensing, an LCD and more!? The PowerCore is what you need! This blog post covers the features of the PowerCore and walks through the story of why and how it was developed.

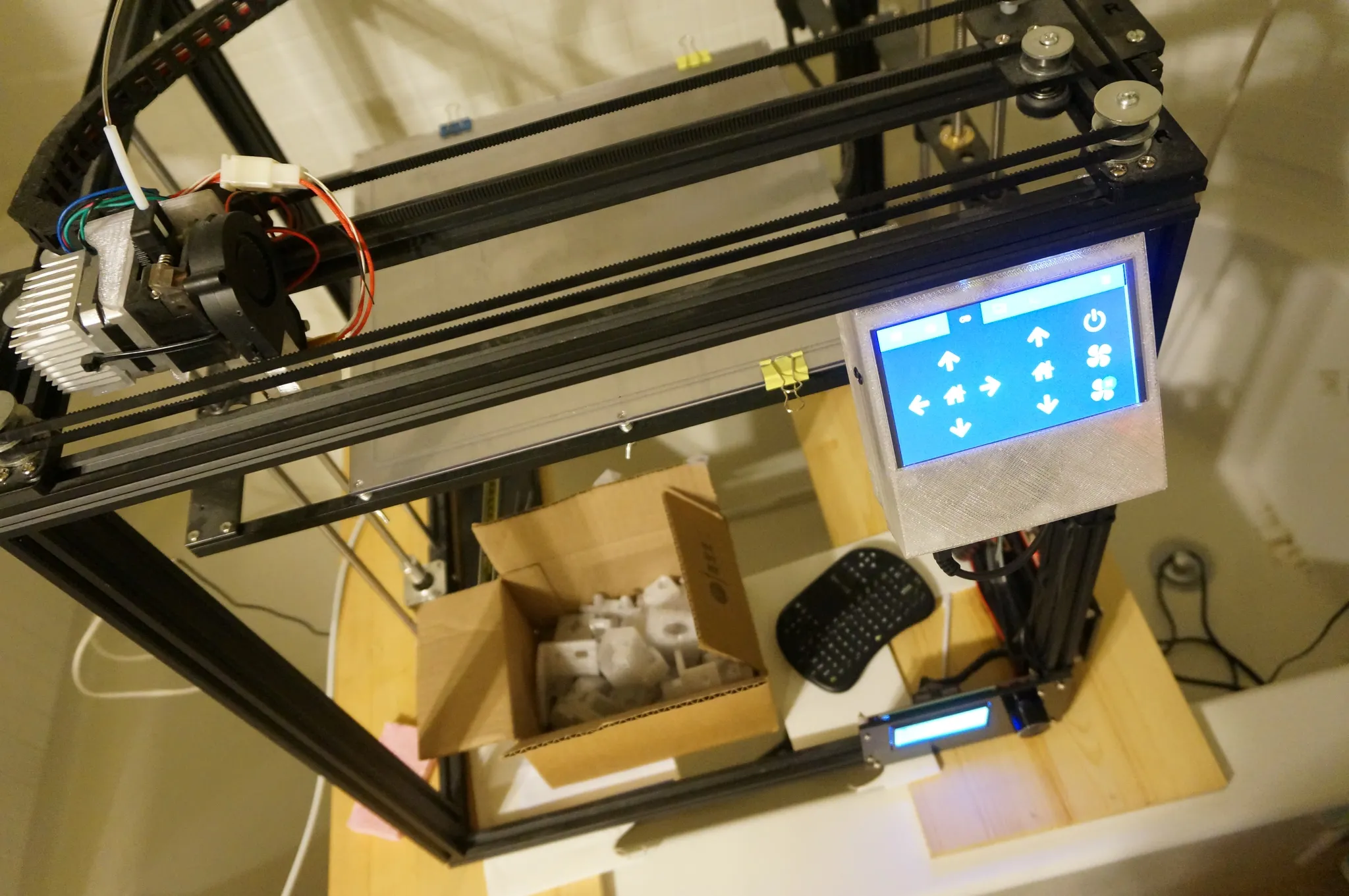

I have been exploring different firmware options for my TronXY X5S 3D Printer, and Klipper is interesting in that it runs the CNC control on a single board computer (SBC) and uses the 3D printer's control board as a motor controller. I've also added a touchscreen display to the SBC running Klipper for easy printer control.

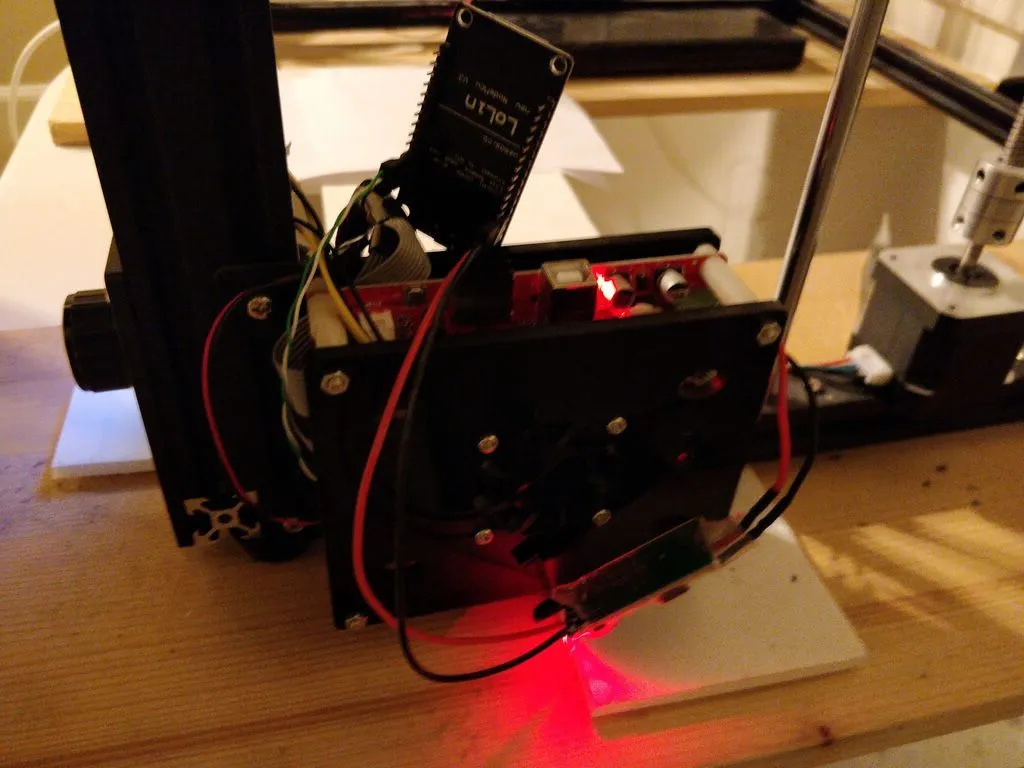

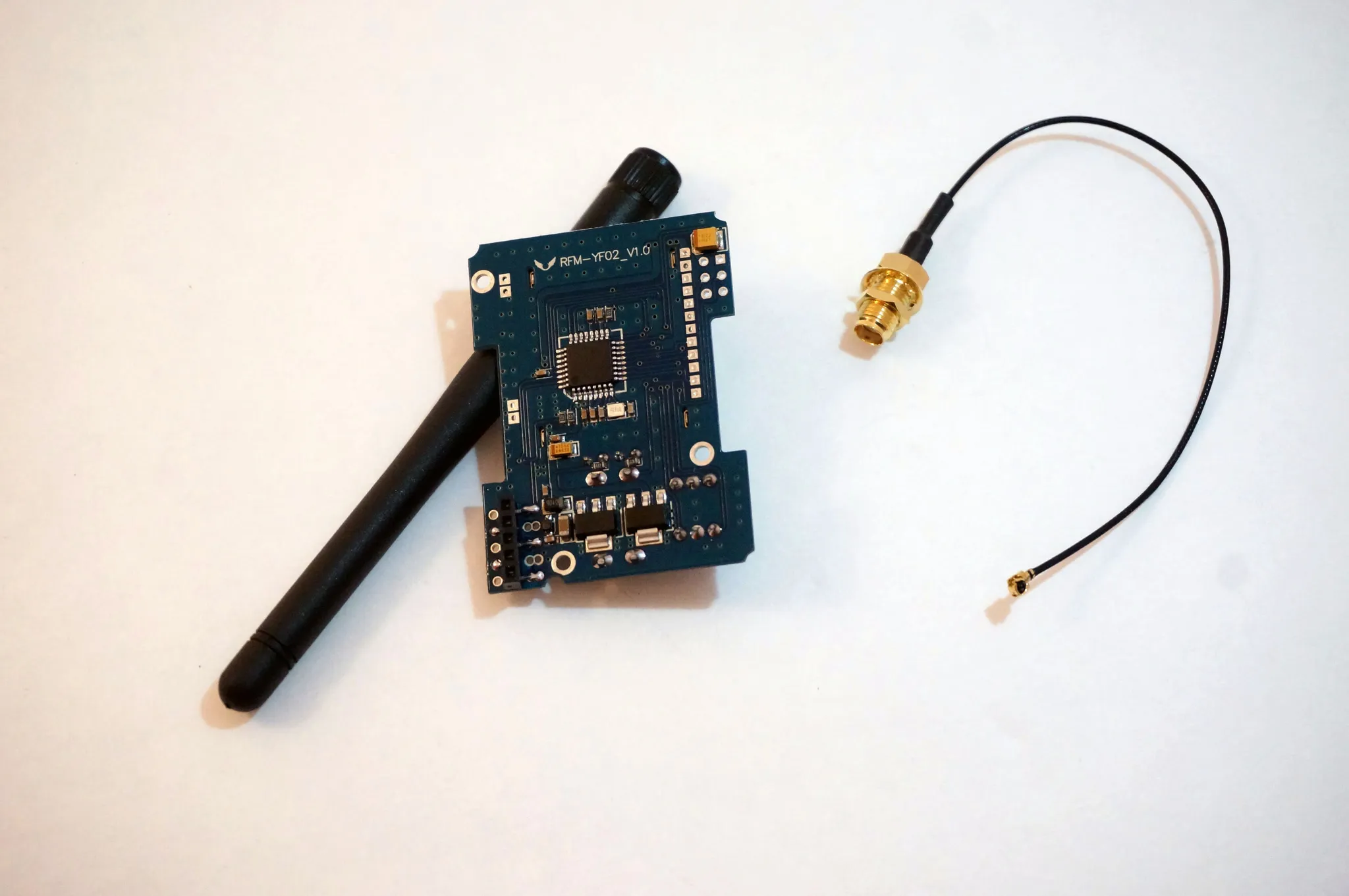

Let's add WiFi to the TronXY X5S 3D Printer (or any printer for that matter) for only a few dollars!

This post follows from a project at the Stanford Vision and Learning Lab, where I've been working for some time, and serves as documentation for our social navigation robot's onboard multi-target person tracker, which I developed under the guidance of Roberto, Marynel, Hamid, Patrick, and Silvio.

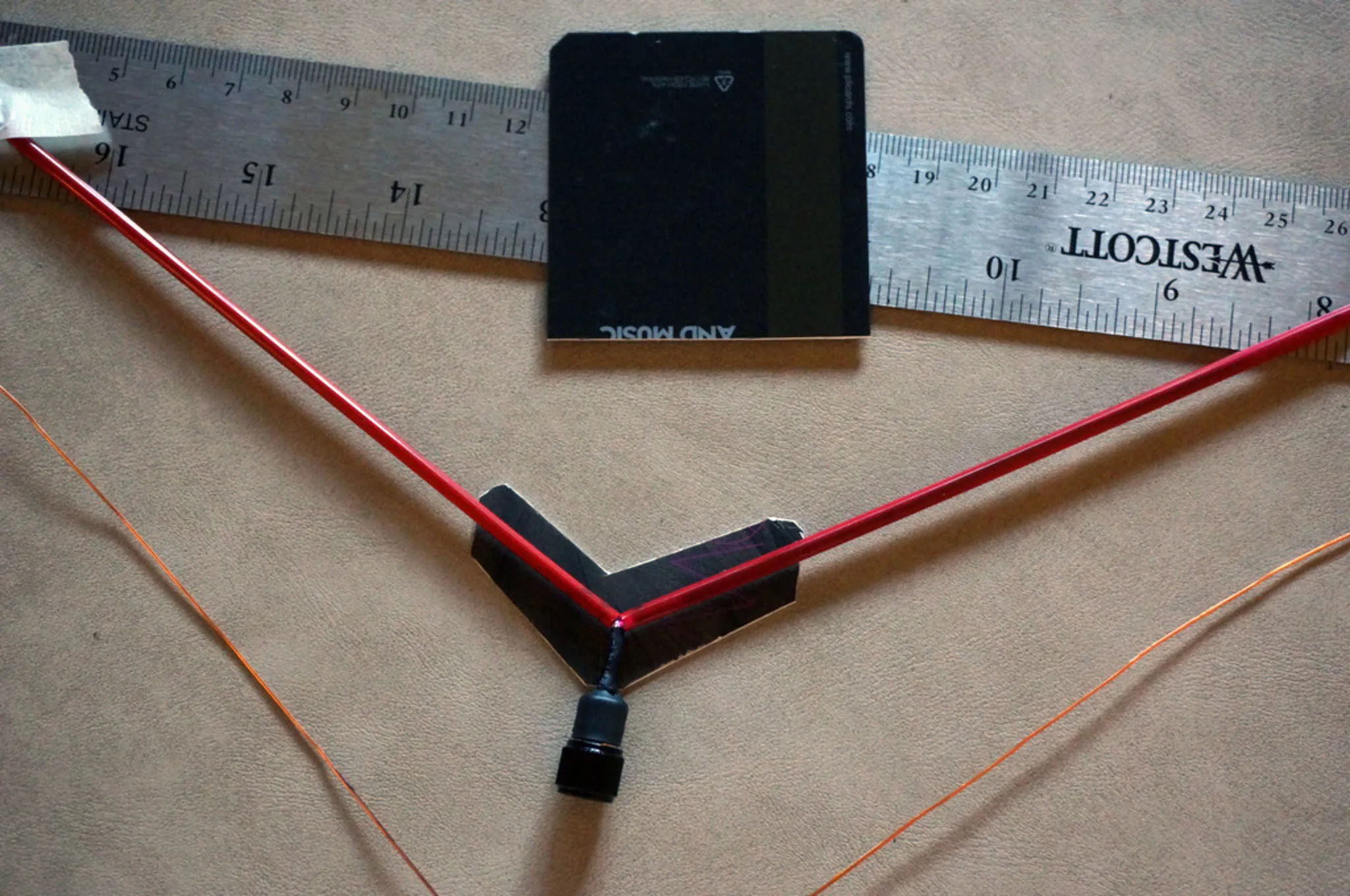

Here's a quick guide to build your own Inverted Vee antenna.

This is a build guide and review for the large format TronXY X5S 3D printer.

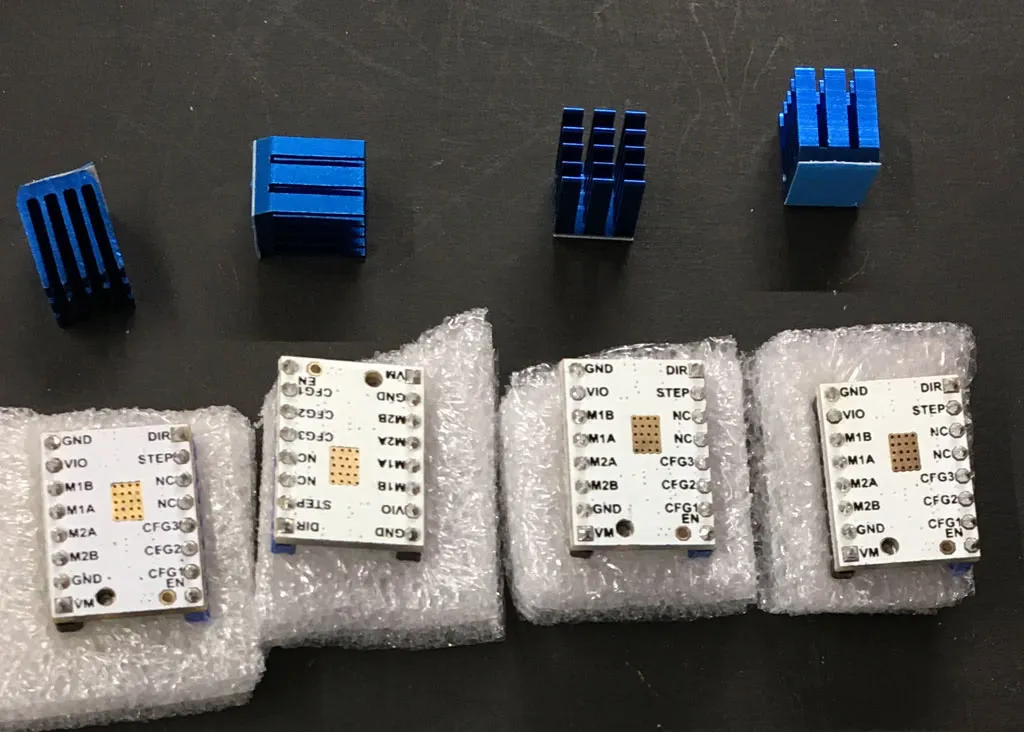

My 3D printer sits in a closet that shares a wall with a bedroom, and its original A4988 drivers produced noticeable buzzing and ringing during quiet periods. To achieve near-silent operation, I upgraded to TMC2100 stepper motor drivers. Despite a higher cost, these drivers offer exceptional performance. This guide covers the installation and configuration of these drivers.

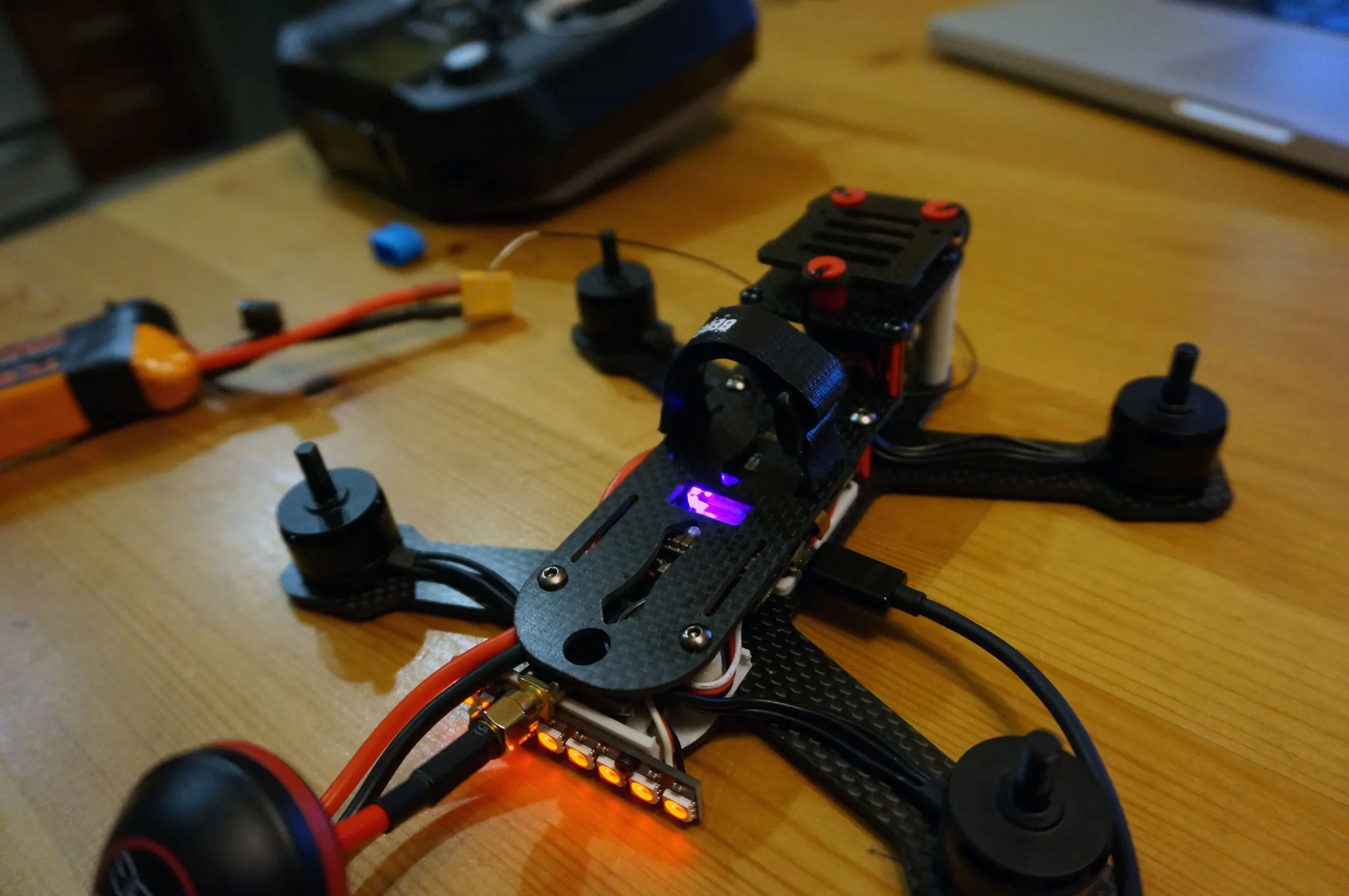

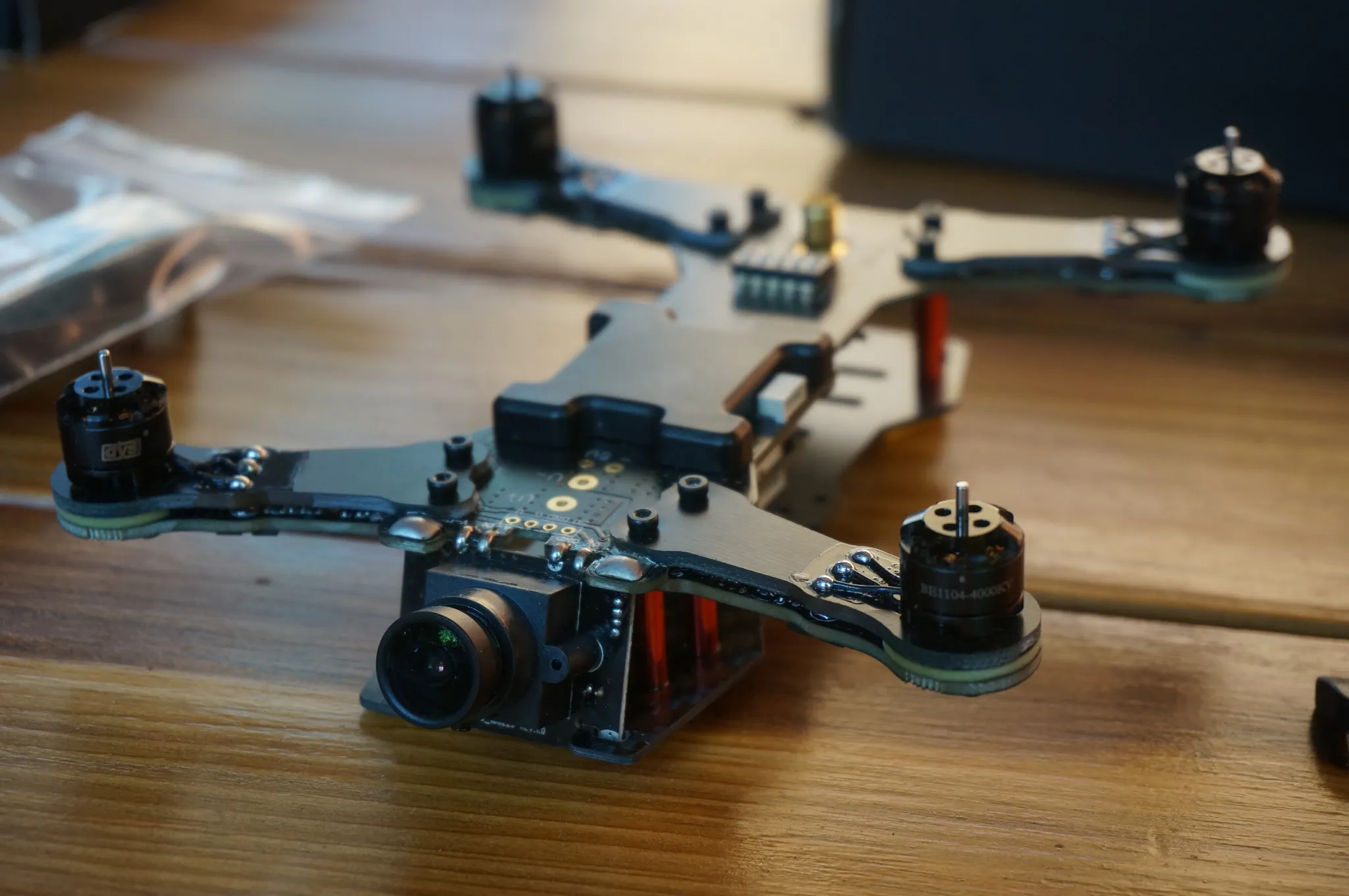

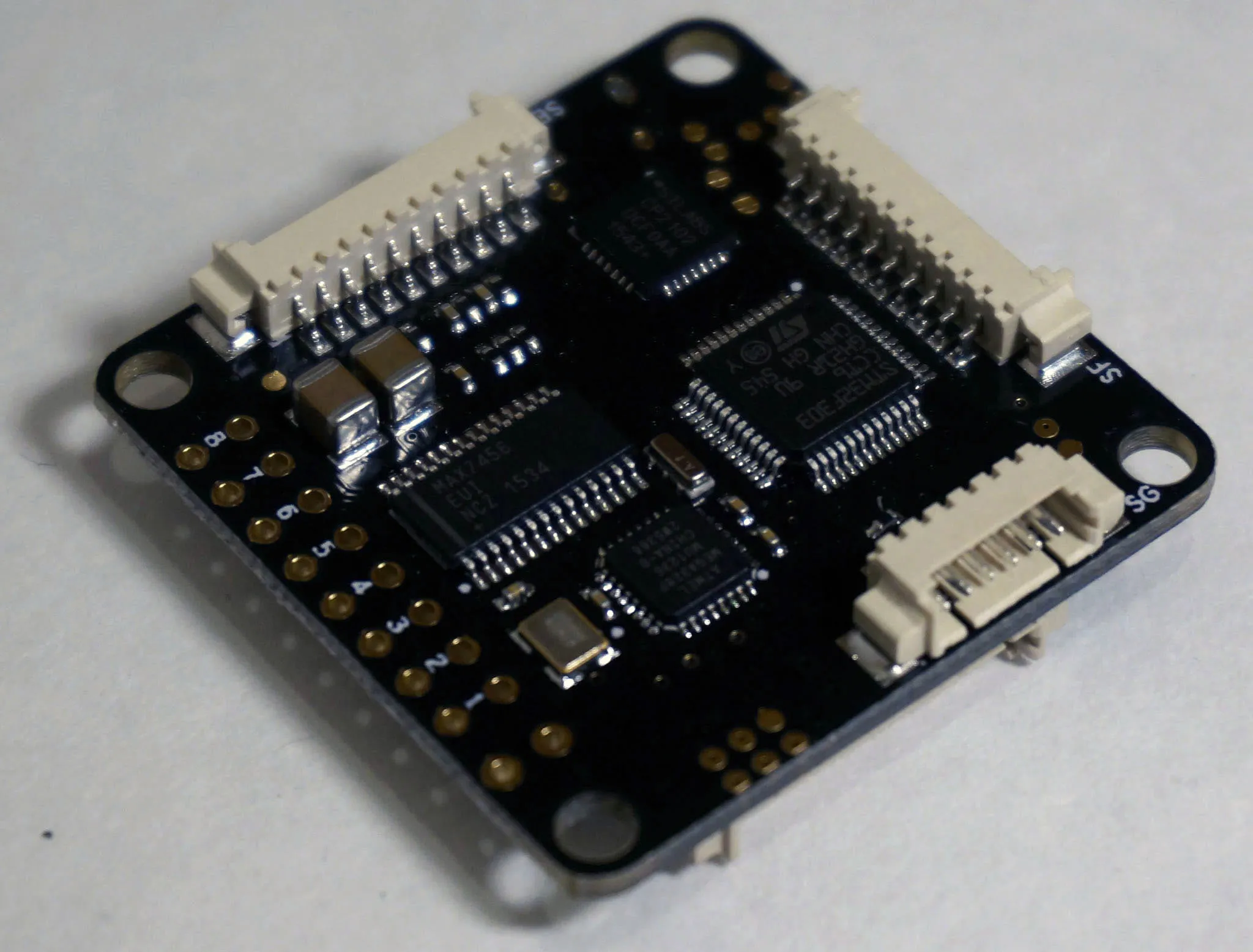

This is a build guide for a drone based on the NOX flight controller, a full-featured F4 flight controller with integrated OSD and 4x BLHeli 32 ESCs, DSHOT, and telemetry.

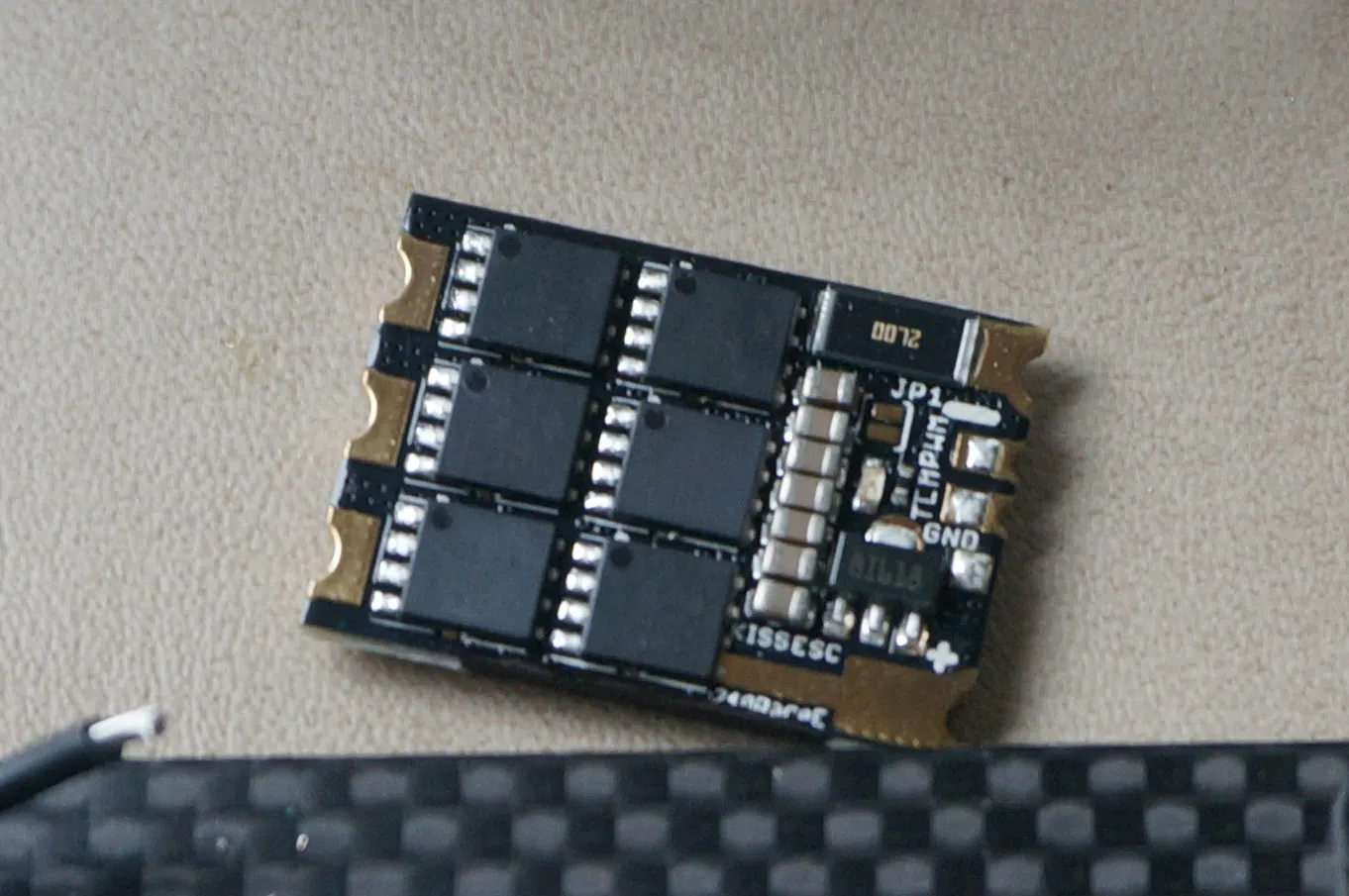

This guide assumes you have a flight controller running BetaFlight connected to KISS 24A ESCs. Alternatively, you can use a USB-UART converter to program the ESCs.

I would like to run Jupyter on a server with some other apps running on an Nginx server. This guide covers the configuration of the Jupyter notebook behind the reverse proxy at the path "/groot".

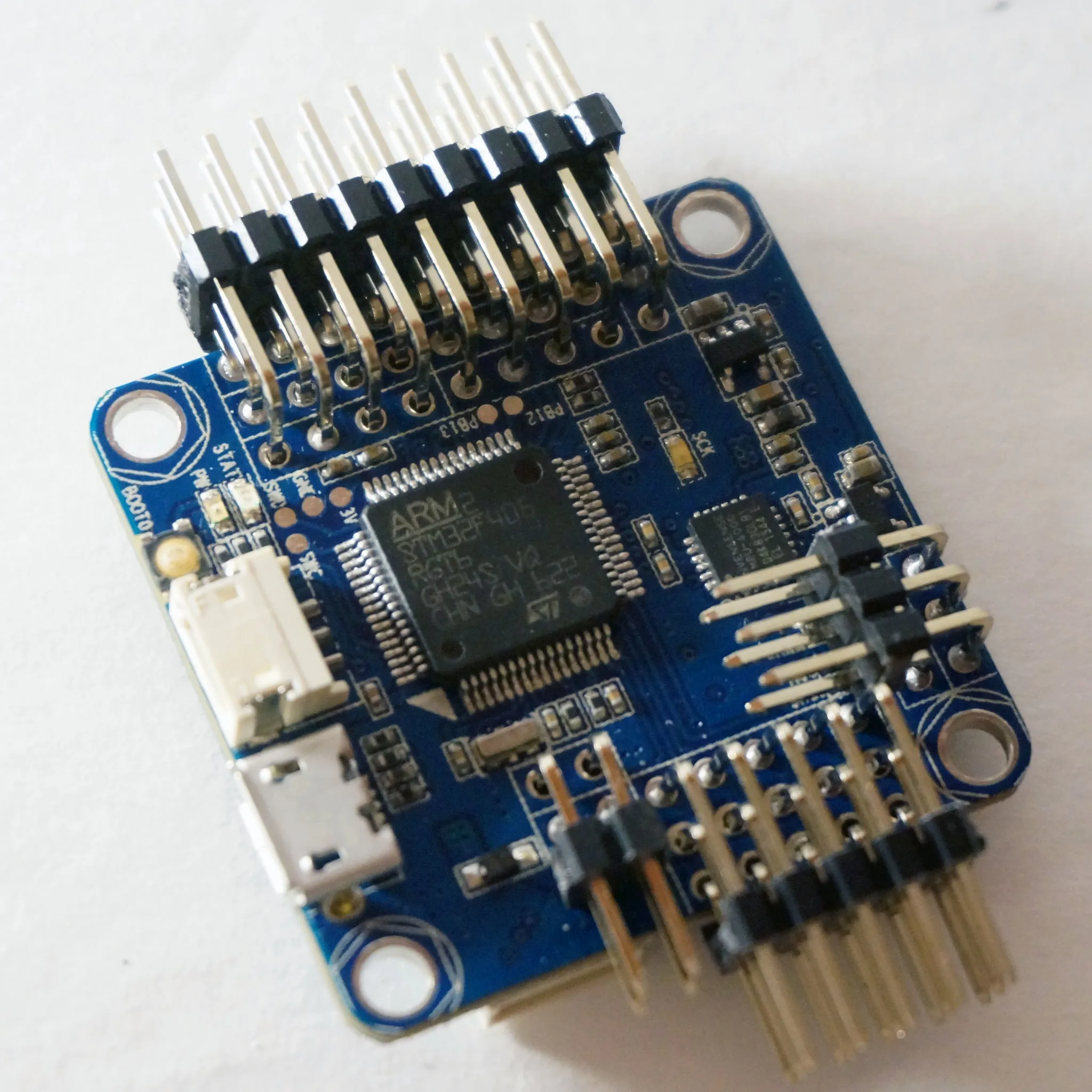

I'm starting to experiment with PX4 off-board control. This is a setup guide for running a Pixracer with an Orange Pi on Ubuntu 16.04 with ROS Kinetic.

A while back I reviewed a bunch of box-style goggles. These single-screen goggles are generally more cost-effective, but bigger and less comfortable on the face. This time around I got a couple of dual-screen goggles, e.g., FatSharks, to try out and see if they're worth the money.

I was curious what the best low-light FPV camera for the money is, so I ordered a bunch of low-light FPV cameras and tried them out. This article details what I found.

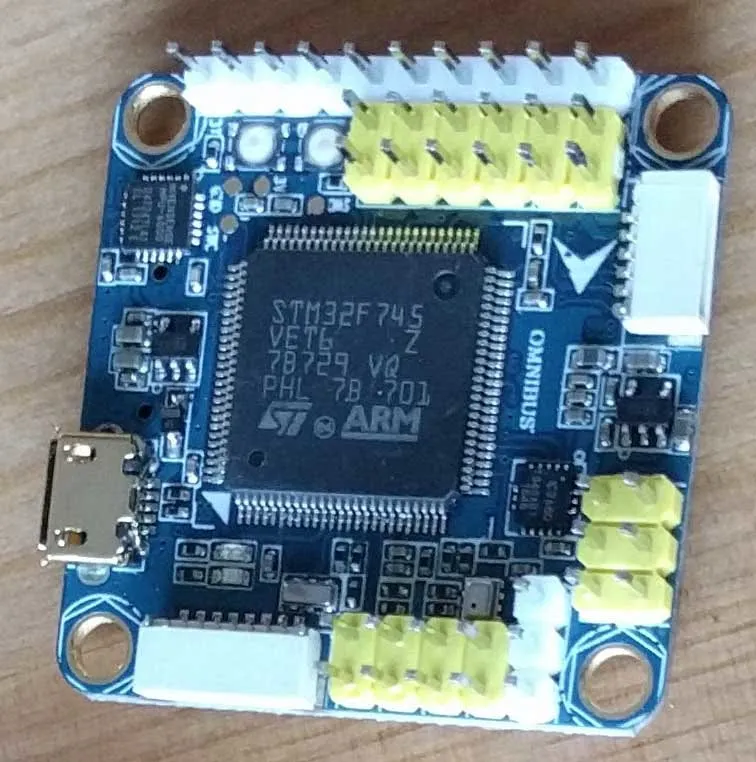

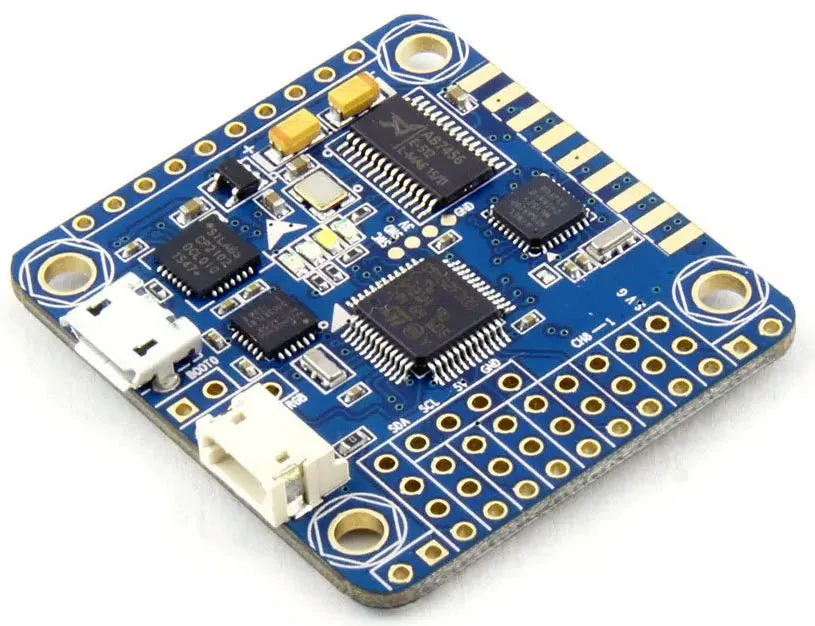

As of Jul 31, 2017, 2:26 PM PDT, Betaflight 3.2 is in the Release Candidate stage and with this release, F7 flight controllers have arrived. While an F7 MCU is not entirely necessary, not yet anyway, having a very fast and very capable MCU is future-proof. I expect some of the most exciting new performance features in Betaflight will target the F7 MCU. This article is an overview of the current F7 landscape.

The Asguard flight controller is an OmnibusF4 with an integrated 4-in-1 ESC. This is a build guide for an Asguard based quadcopter.

STM32CubeMX is a cool little code generation tool from STM that helps you choose the pinout for your microcontroller project given the pin assignment constraints of a given CPU. The only problem is that it doesn't allow you to generate a Makefile project for use with the arm-none-eabi- toolchain.

The Aurora 100 is a micro brushless quadcopter from Eachine, similar to the Falcon 120. It's no less powerful than the Falcon 120, but it's lighter and therefore more nimble. This new class of very small, sub-250g drones is exciting as no FAA certification is required to fly them.

If you run into weird issues flashing your STM32 board, it might be because the board is locked.

The Taranis Q X7 is an awesome new radio from FrSky that is budget-priced at just over $100 and stocked full of premium features like a backlit screen, audio output, an SD card for tons and tons of models, and not to mention, every switch is 3-position.

The Falcon 120 is a micro brushless x-configuration quadcopter. This is a review and setup guide.

I recently made some improvements to my Geeetech Prusa i3 3D Printer. These quick and easy upgrades provide a massive improvement in build ease and quality.

RISC-V is the new hotness, and the SiFive FE310 is the first open-source RISC-V hardware SoC. This is a guide to start developing for this chip or FPGA.

The BLHeli Configurator by @diehertz is here! And so is the beta version of DSHOT for BLHeli_S. In this guide, I'll tell you a little bit about what I've learned from diehertz about BLHeli_S and how to flash your own ESCs.

In this article, I walk through the iterative design process of building my ultimate micro racer. I took a BeeRotor u130 and custom printed some parts (links below) to turn this into a bigger, faster TinyWhoop that I'm calling the UltraWhoop. Here it is next to its smaller counterpart, the eWhoop.

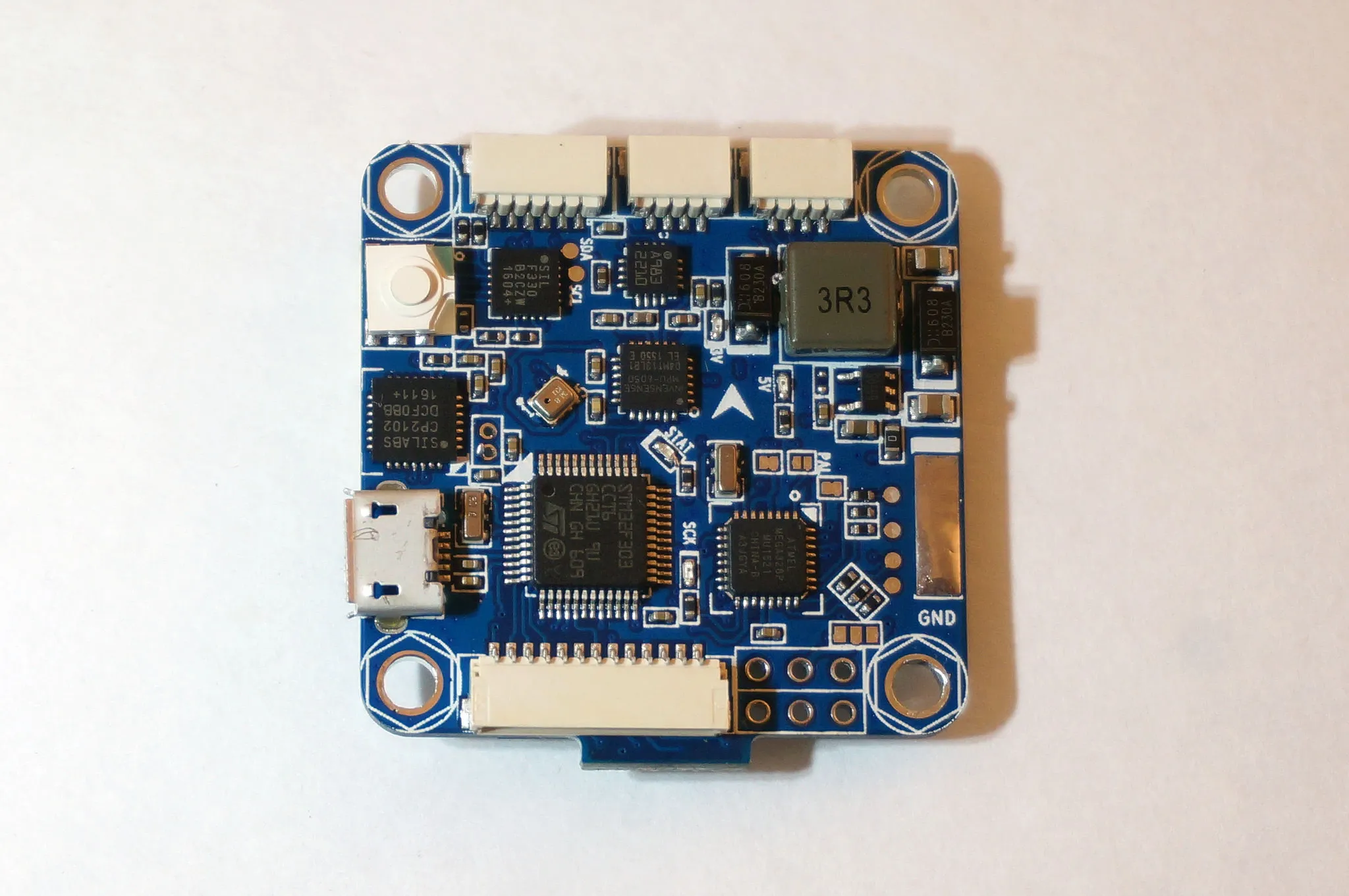

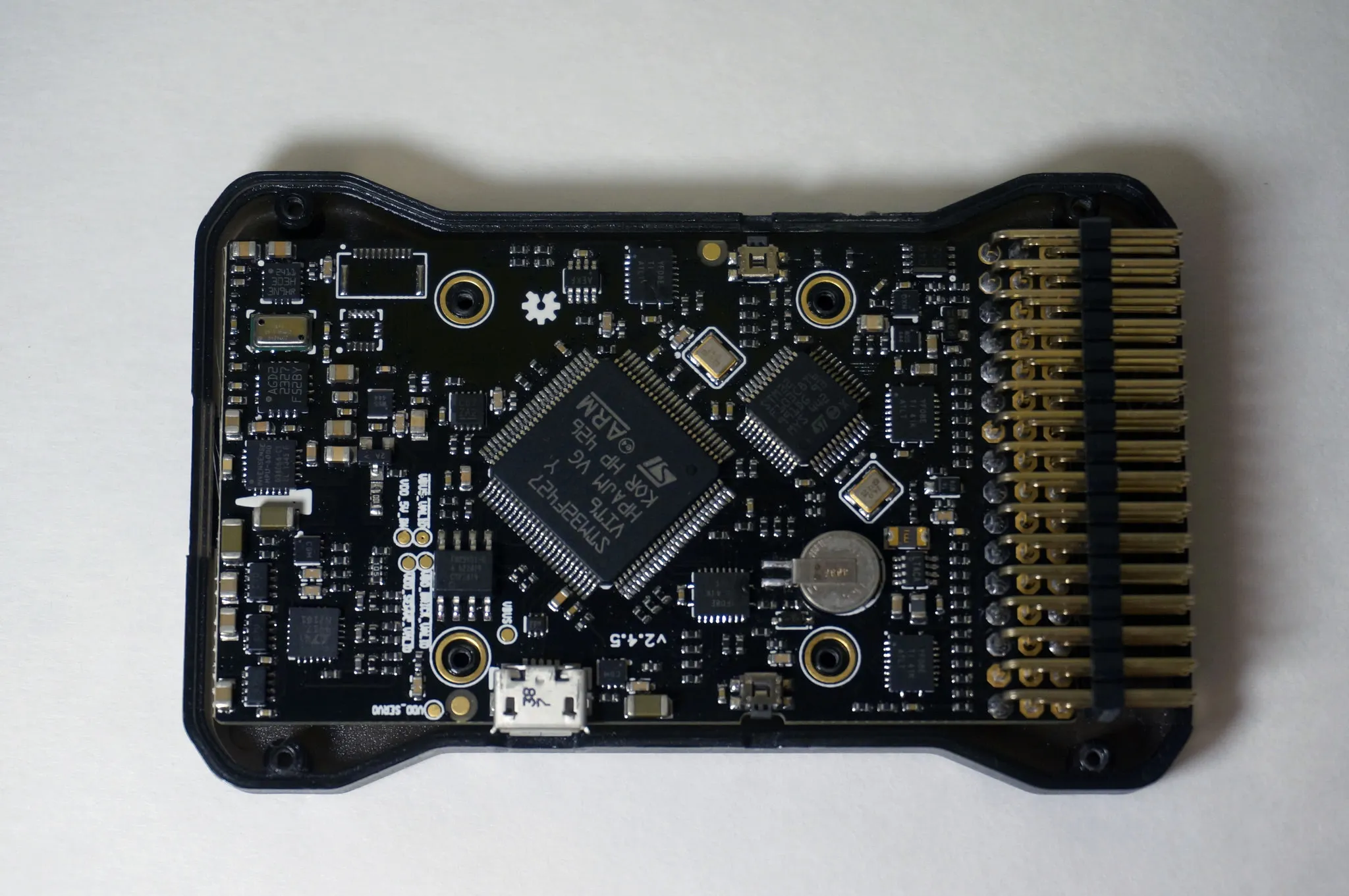

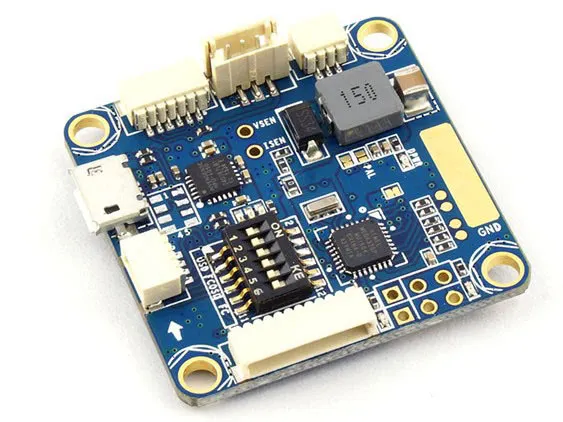

This is an overview of the Omnibus F4 flight controller and Betaflight OSD (BFOSD), which I developed and implemented.

This is a review and setup guide for the [Grasshopper F210 Quadcopter](http://goo.gl/qhVtlB).

This article is an intro to the world of brushed-motor micro quadcopters. I've tried out a few and share some thoughts below.

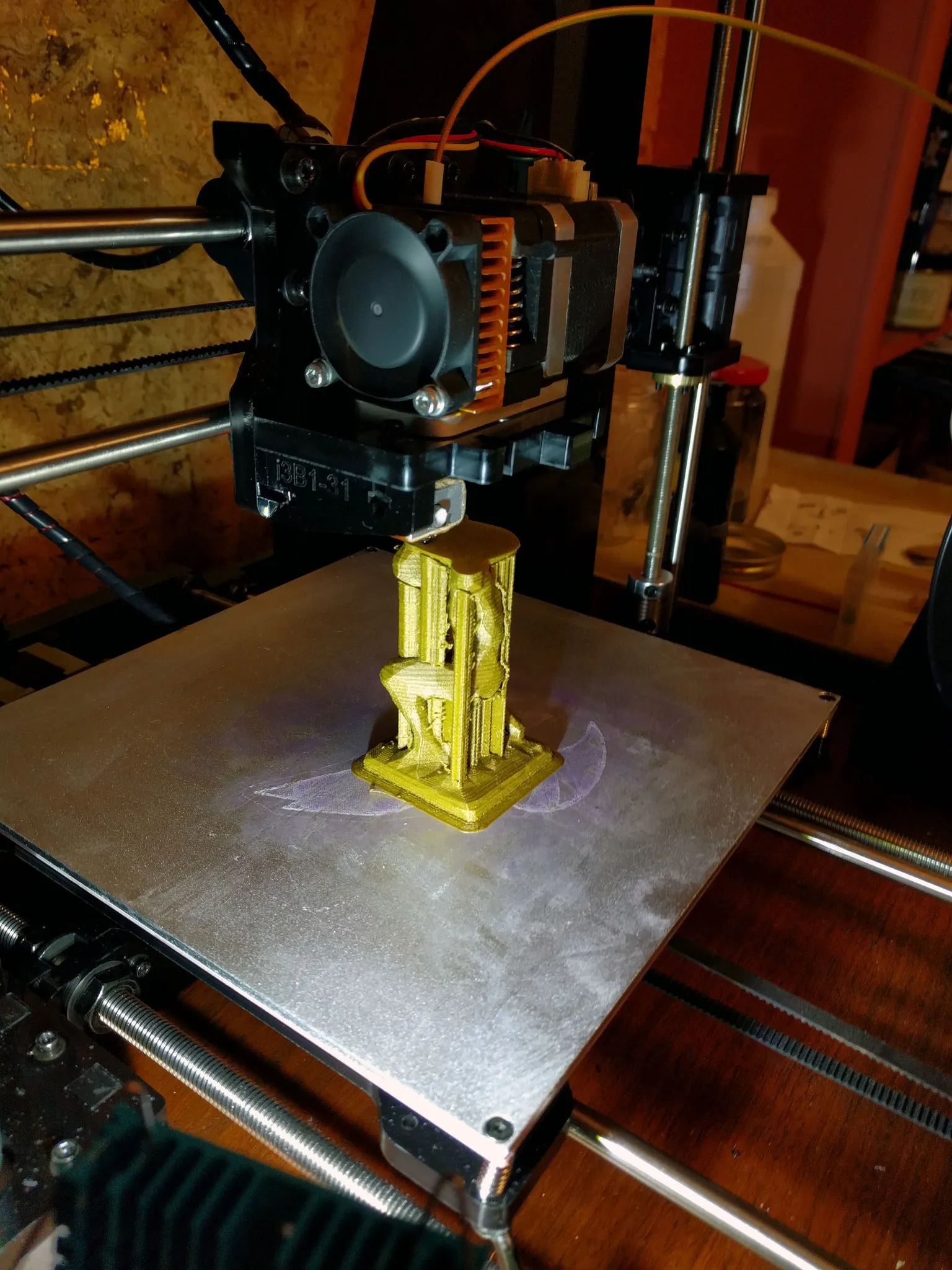

I just got my first 3D printer, the Geeetech Prusa i3 X. This post details my experience as a 3D printing newbie from unboxing, assembling, and using this 3D printer. First, let me show you what this printer can do.

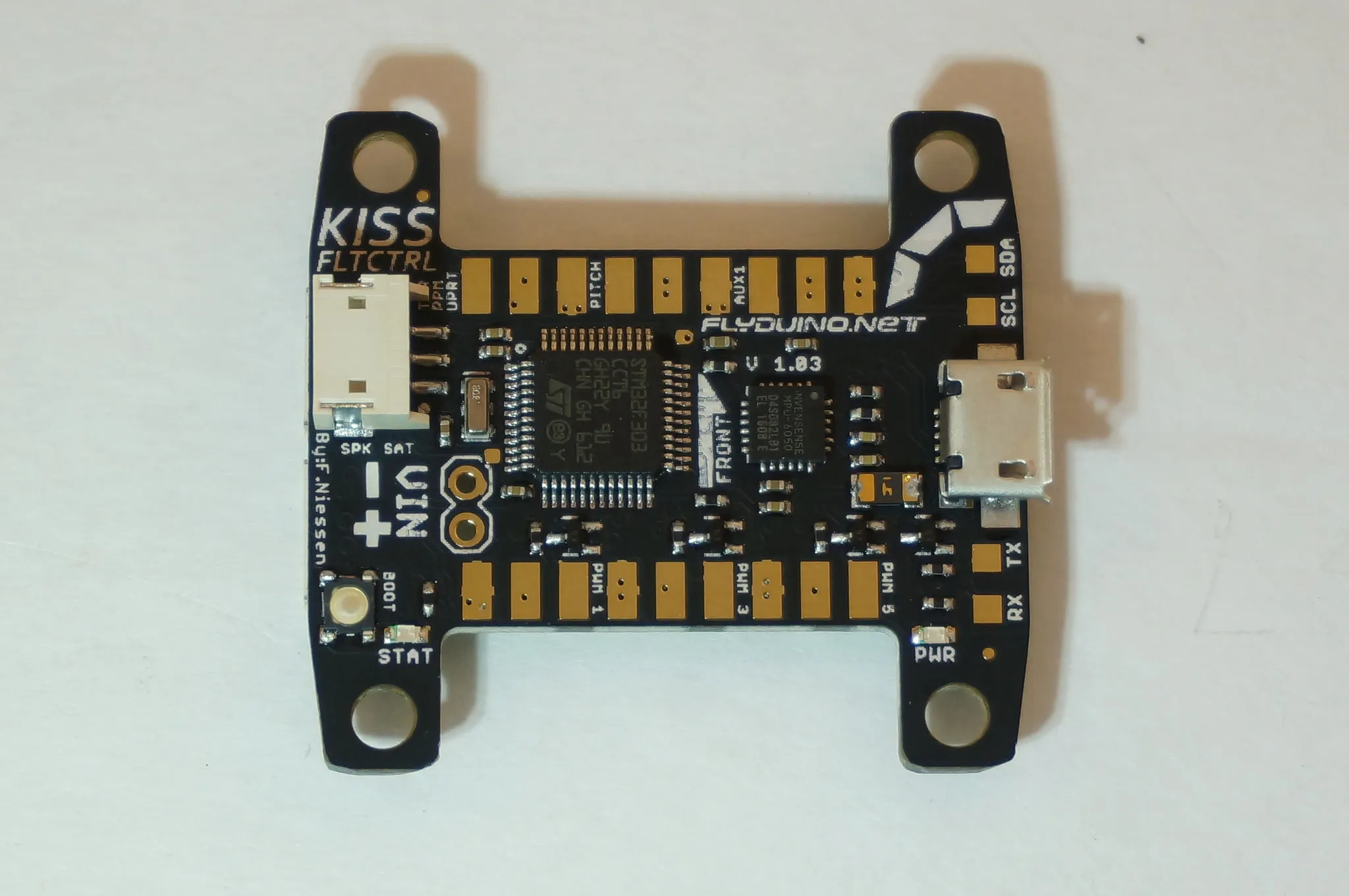

This guide will show you how to install Betaflight on your KISS FC.

This is a quick overview of the Airbot F3S AIO Flight Controller and Typhoon 4-in-1 Pro ESC.

This is my unboxing, review and hacking guide for the Flysky FS-i6 2.4GHz radio transmitter.

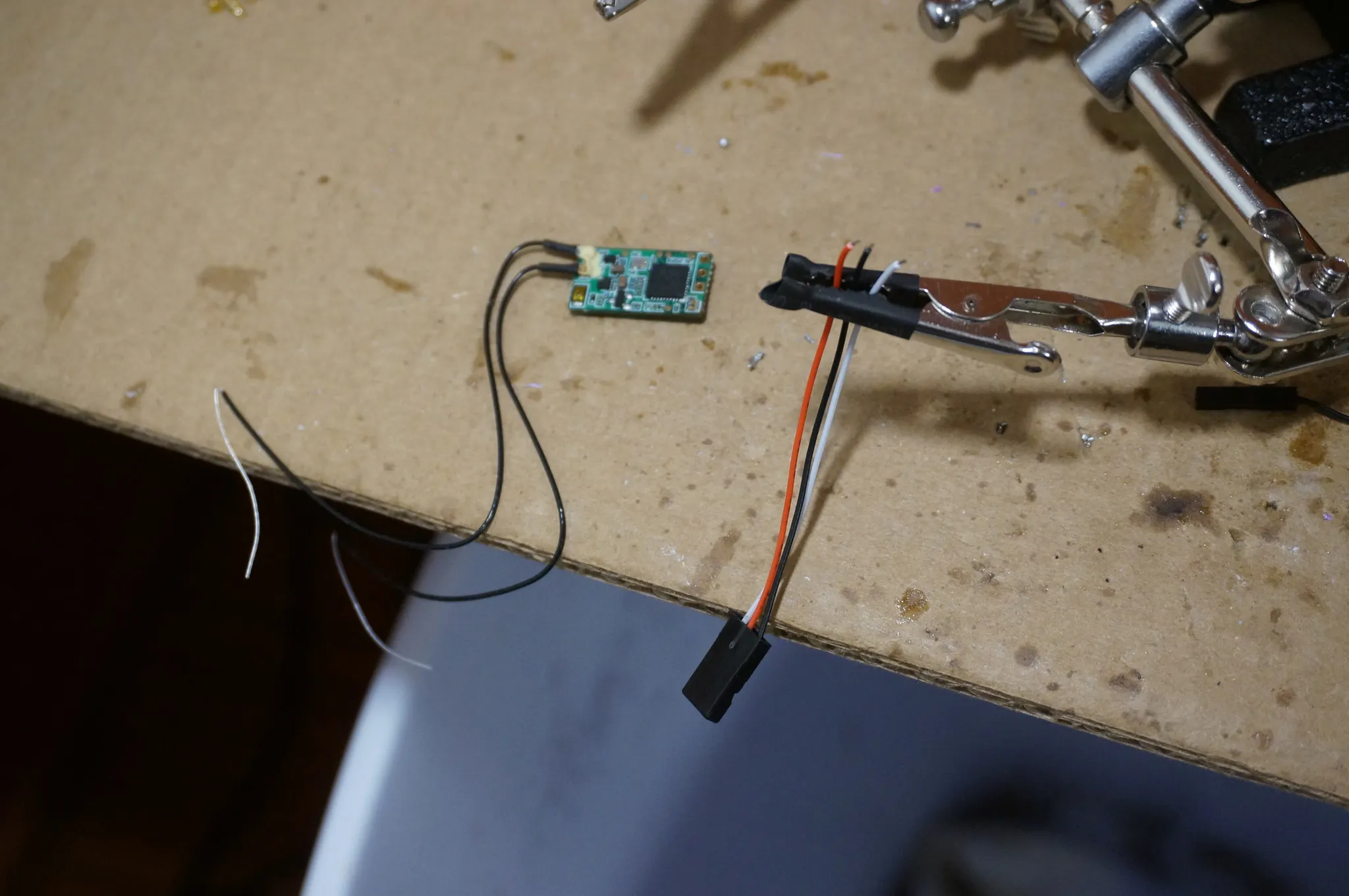

This guide will walk you through the setup of a Spektrum RX Satellite. I'll show you how to bind it with your transmitter using Betaflight version 3.1.6 and newer. I'll be using an OmnibusF4 in my example.

This is a review, build and setup guide for the Airbot Typhoon 180 Miniquad with the brand new OmnibusF3 flight controller that I designed in collaboration with Airbot.

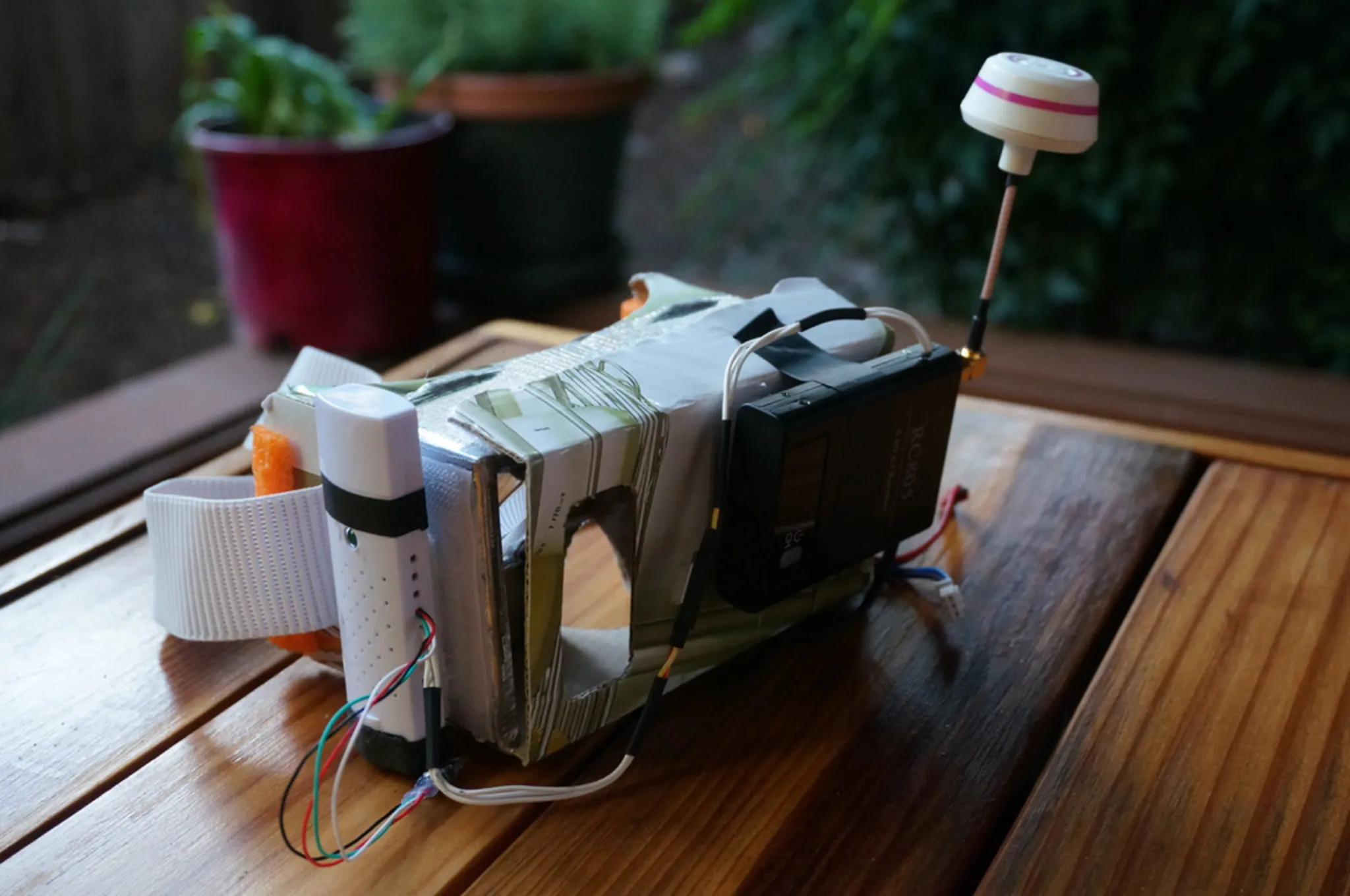

At Maker Faire Bay Area 2016, David, Jack, Huned and I built a drone with the help of some kids. This is the story of building that drone.

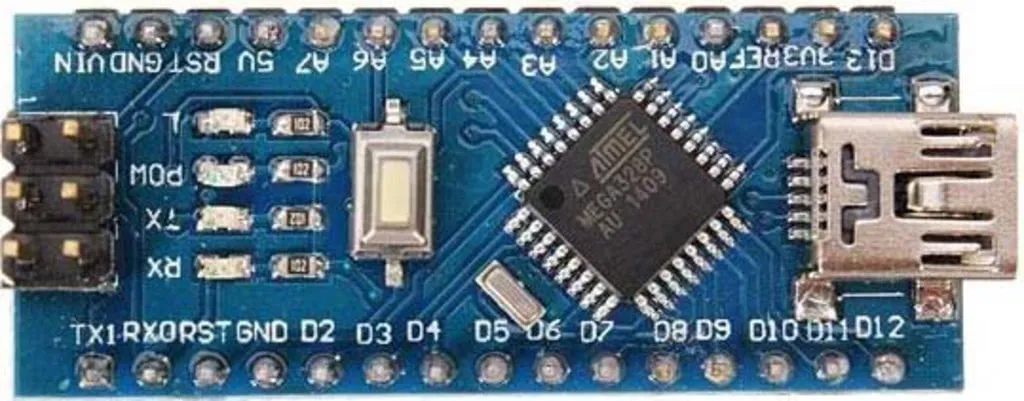

Programming a microcontroller is a bit different than programming on a PC. Error messages aren't nicely propagated to a terminal or GUI.

This is a build and setup guide for the BeeRotor u210.

This is a review and build guide for a mini drone based on the AirBot Flip32 F3 AIO Lite and Typhoon 4-in-1 ESC.

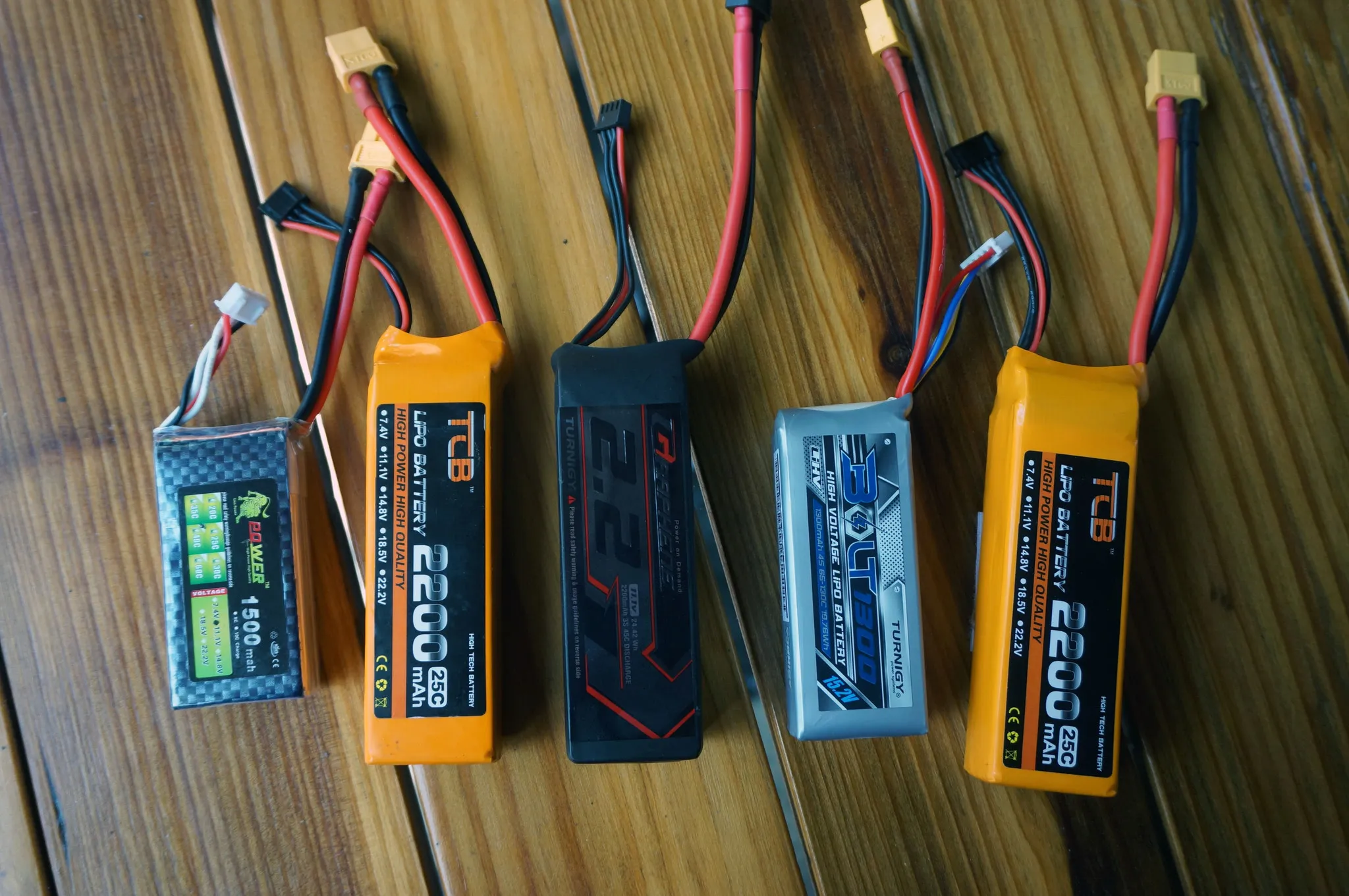

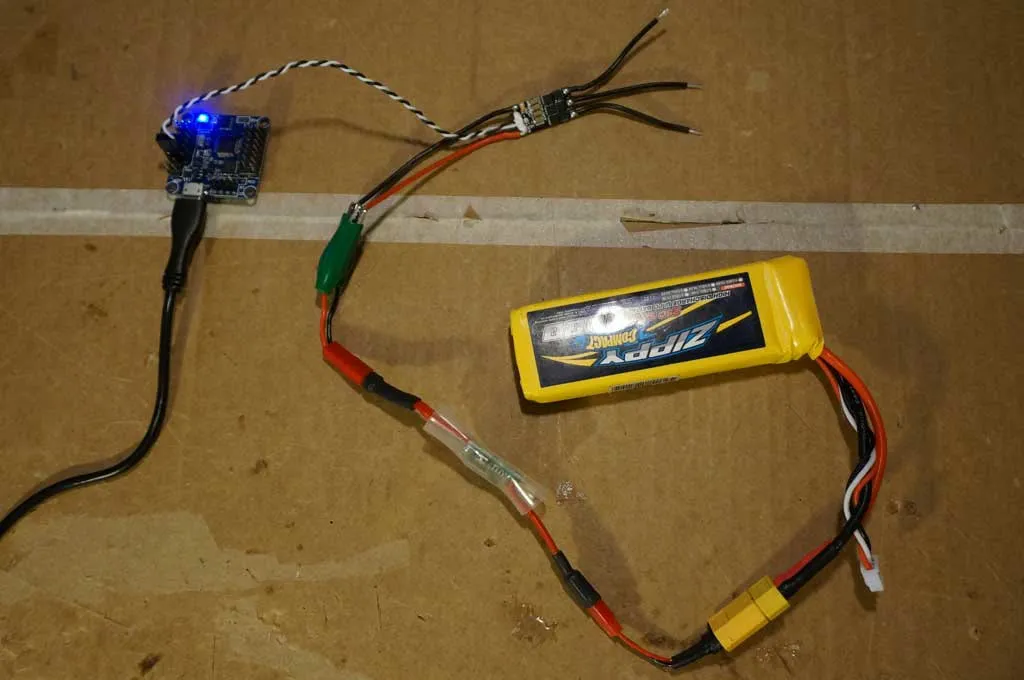

This is a performance analysis of 3S 2200mAh Graphene and 4S 1300mAh Turnigy Bolt batteries.

If you live in the SF Bay Area, come to Maker Faire on May 20-22, 2016 and build a drone with me! I'll be hosting an exhibit called "Drone Building 101" where I'll be helping folks build their own FPV mini-quad.

This is a review, build and setup guide for the Rctimer Beerotor X200.

This is a setup guide and review of the Rctimer Victor230 FPV mini-quad.

This is a review, setup guide and comparison of the two Airwolf DIY FrSKY Receivers (F801 and F802), both paired with the DJT Transmitter module in my 9x. This article also takes a look at the Naked X4R receiver from FrSky.

This is a review of the new HMDVR FPV Video Recorder. Note this also applies to the Eachine ProDVR, which has the same internals.

This is a review and setup guide for the new BeerotorF3 (BRF3) all-in-one (AIO) flight controller from Rctimer.

So, you want a flying camera to take videos of you and your awesome friends doing amazing things. You've thought about getting one of those pre-built drones by DJI or 3D Robotics, but those are expensive little drones that only carry weak little cameras. Building a giant rig to carry your full frame DSLR isn't ideal either, because it will need to be huge and therefore less portable. So what should we do? Build something to carry our NEX-5T micro 4/3rds camera! This camera weighs 397g with the standard lens and the video can be viewed and the camera controlled over Wi-Fi, ideal for when we're in the air.

In this review, build and setup guide we will walk through building and configuring the Q600 quadcopter from Rctimer.

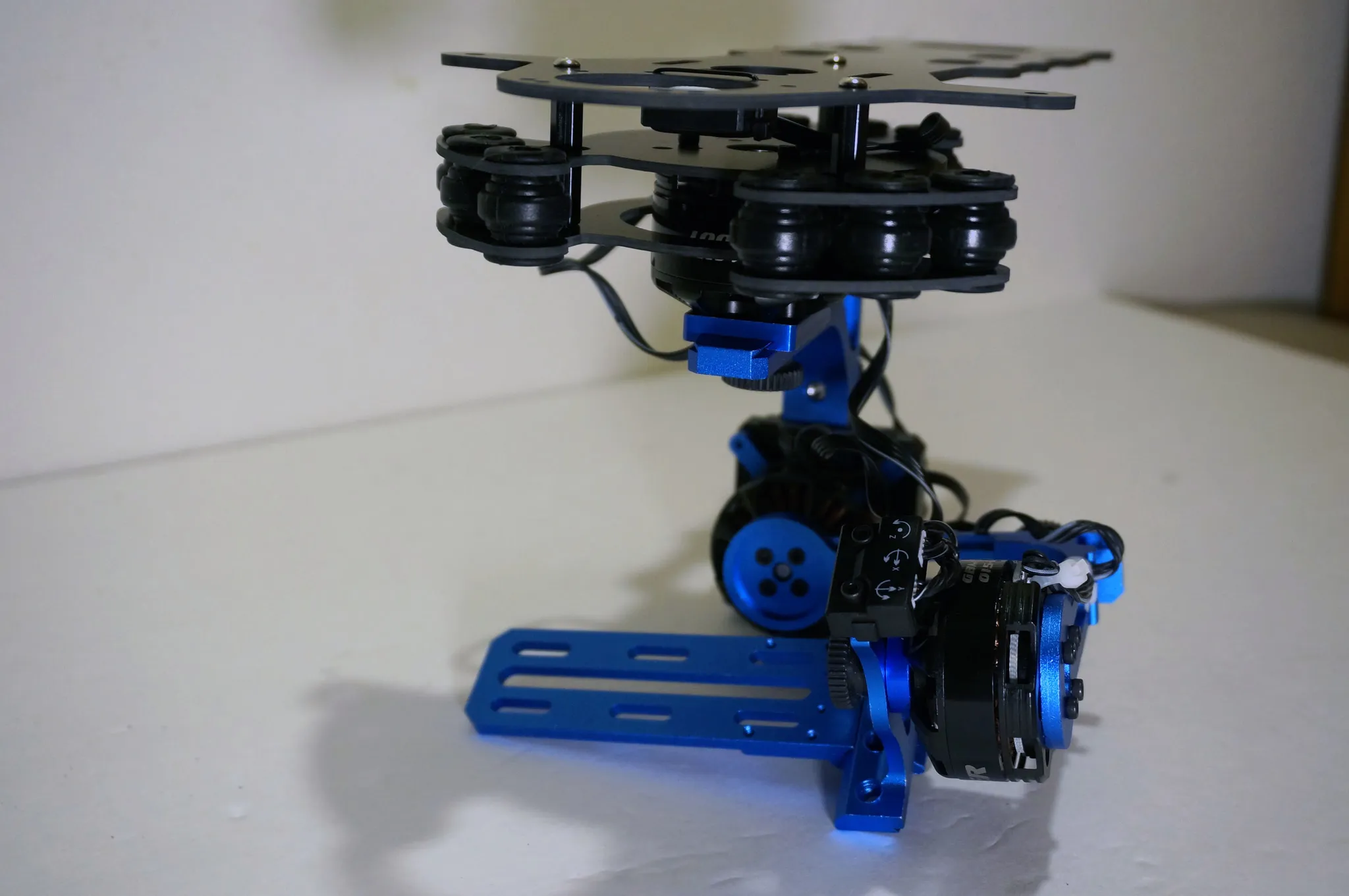

This is a detailed build and setup guide for the Rctimer ASP 3-Axis Brushless Gimbal.

This guide will show you how to install, flash and configure the PixHawk. I'll be using Rctimer's distribution of the PixHawk, which is called the FixHawk. From now on, I'll use these terms interchangeably. The FixHawk will be installed and configured in an Rctimer Q600 quad-copter, which I highly recommend if you're looking for an awesome frame, but these directions should apply to any quad-copter installation and configuration.

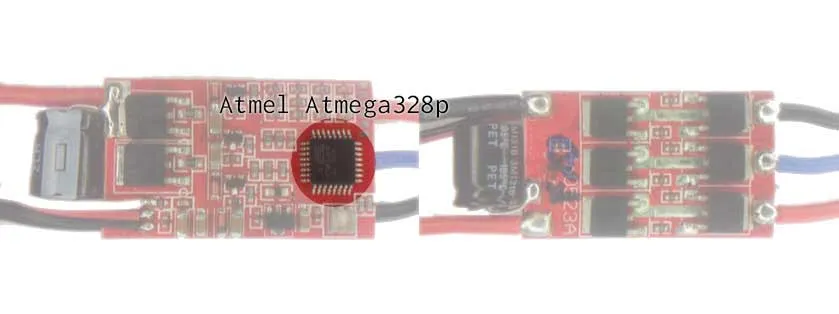

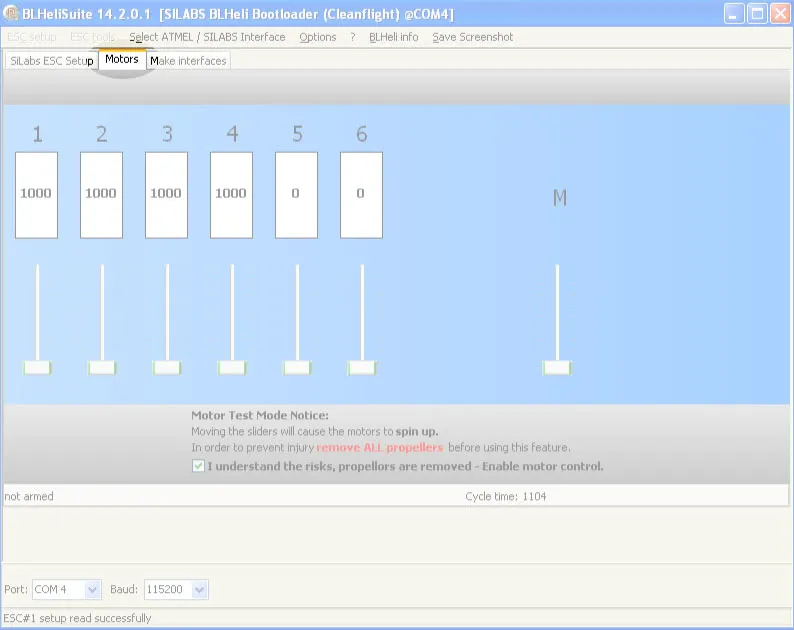

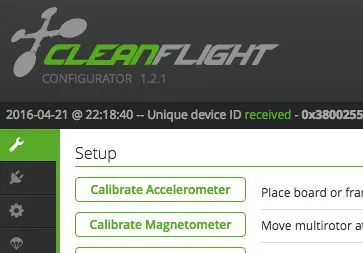

This guide will show you how to flash BlHeli and the BlHeli bootloader so they can be programmed via CleanFlight or BetaFlight passthrough programming in BlHeli Suite.

This guide will show you how to configure your ESCs with the optimal settings for use with OneShot125, CleanFlight or BetaFlight.

This quick guide will explain how to calibrate your ESCs so they throttle up evenly.

This is a review and build guide for the BeeRotor 160mm from Rctimer.

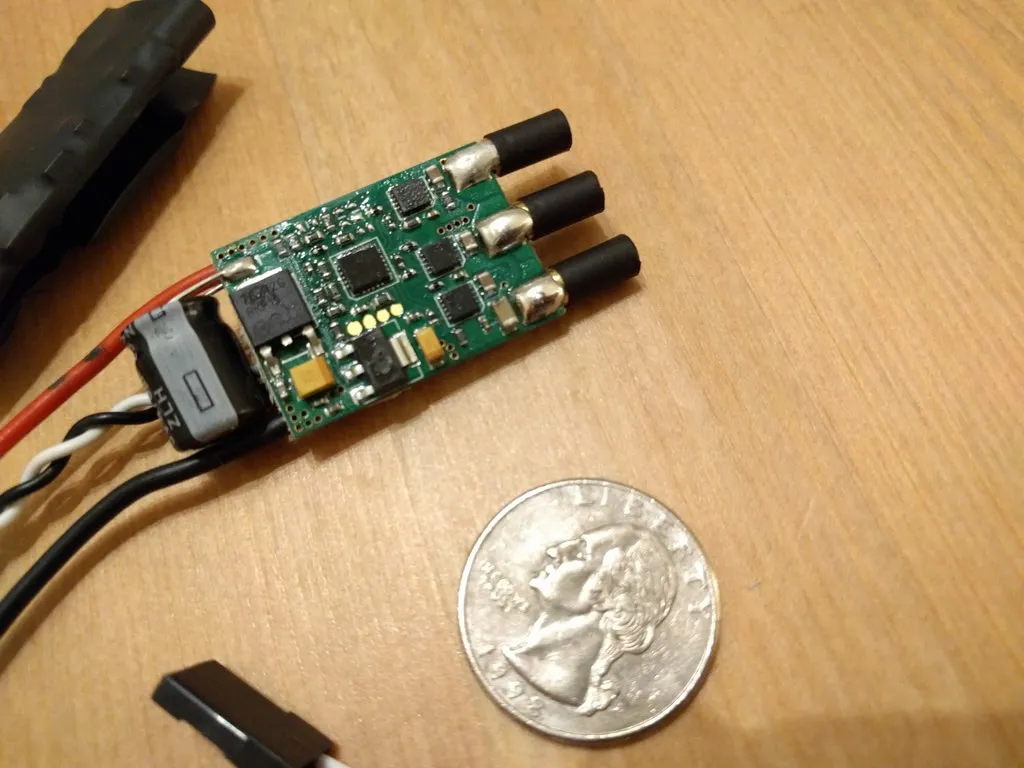

This guide describes how to flash BlHeli and the BlHeli bootloader to XRotor 20A ESCs so that they can be updated via CleanFlight and BetaFlight after installing them in your multi-rotor, without unplugging them from the flight controller.

This article is an in-depth review and setup guide for the Rctimer OZE32 aka RTFQ Flip32 AIO.

After I finish soldering a new copter or make a significant change, like updating the flight controller firmware, I always have to review the remaining setup steps to ensure a safe first flight.

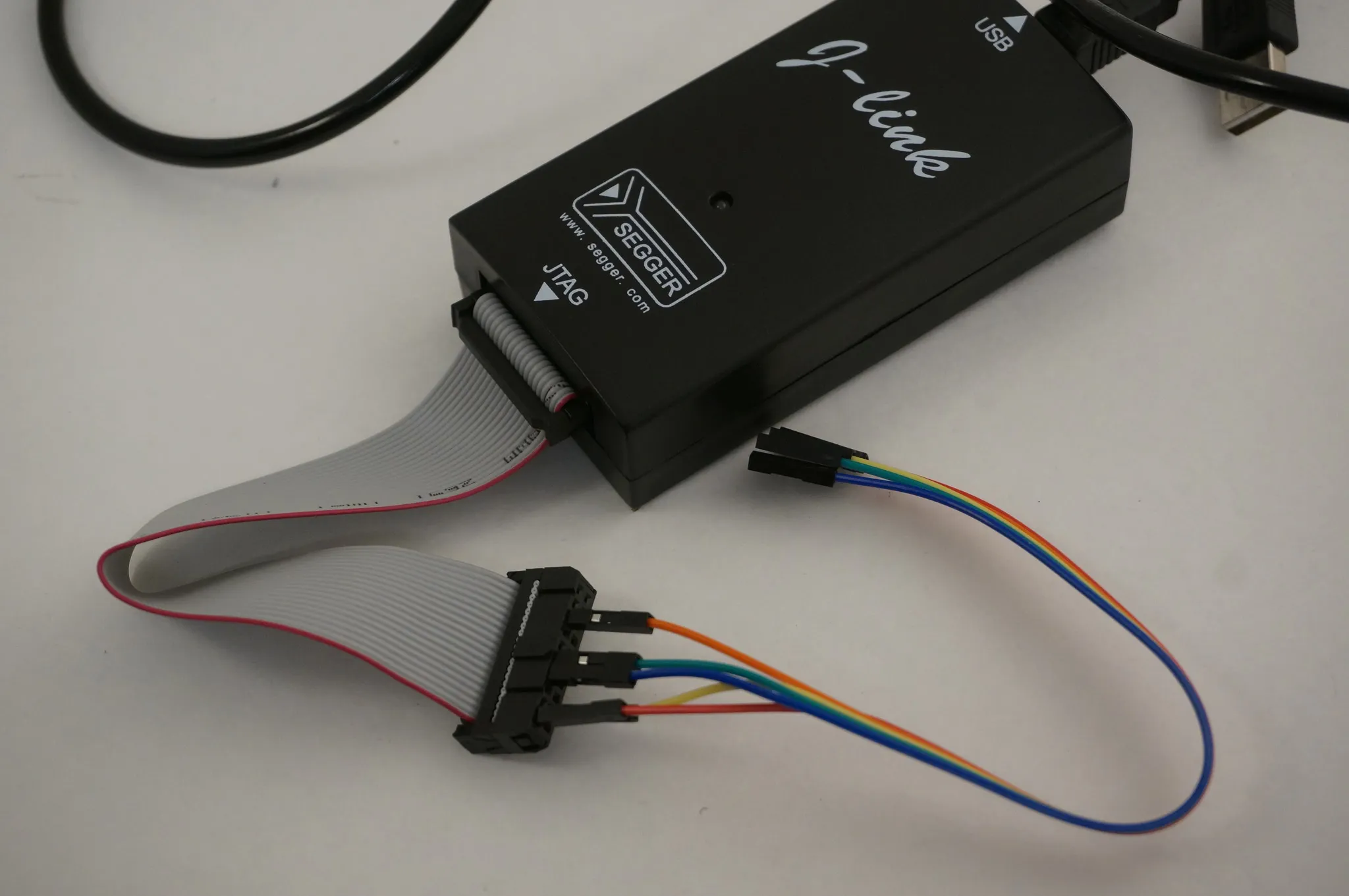

I was having trouble flashing BetaFlight to my SPRacingF3 board, so I used the SWD port to load the firmware. This guide covers that process.

The Arduino invalid id "0x000000" error could be caused by setting the wrong fuses, resulting in the oscillator not running. This guide will show you how to fix that issue.

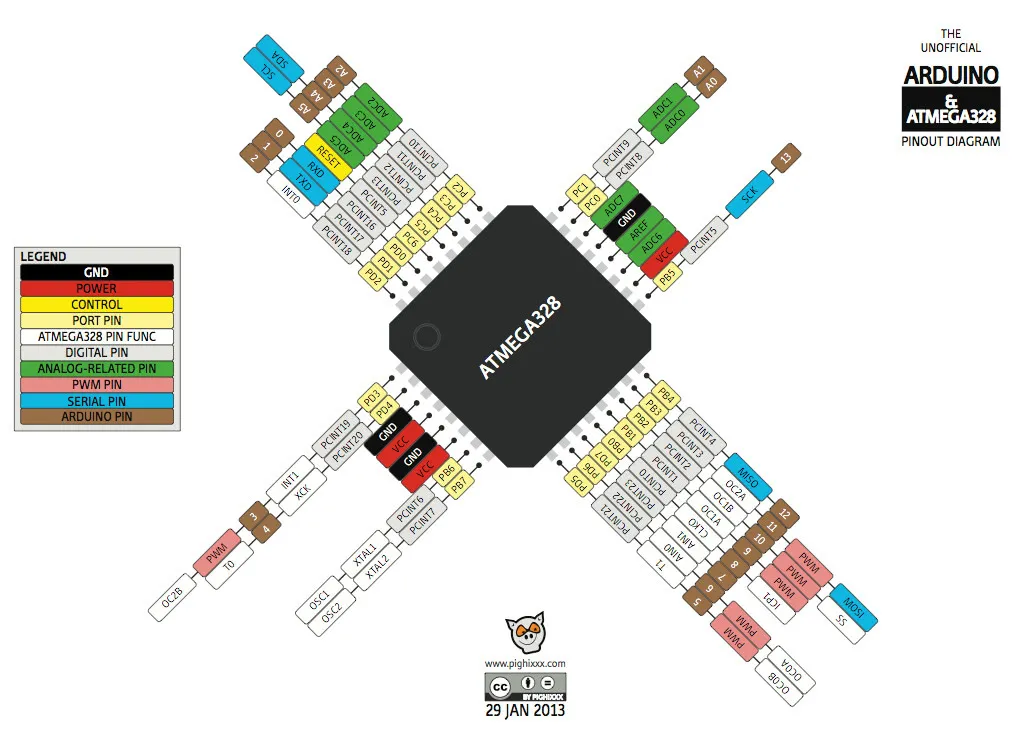

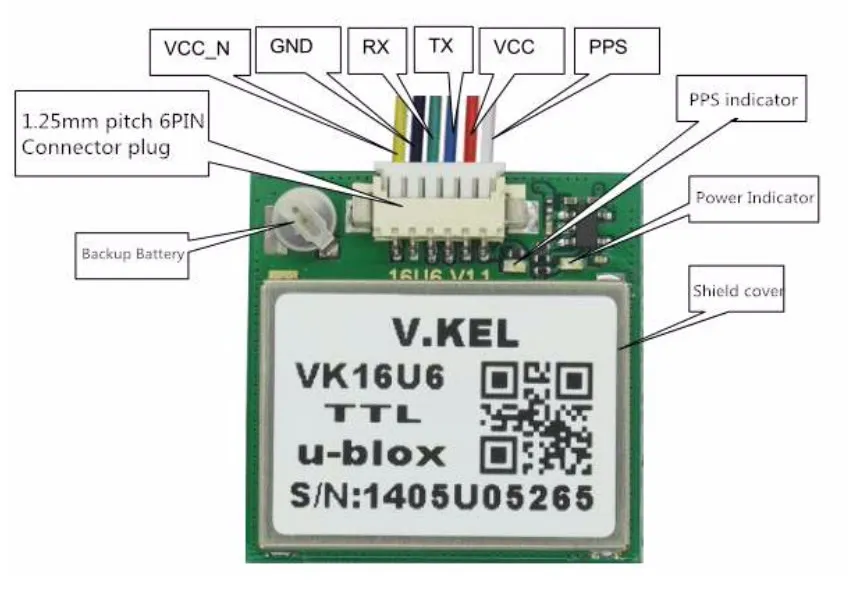

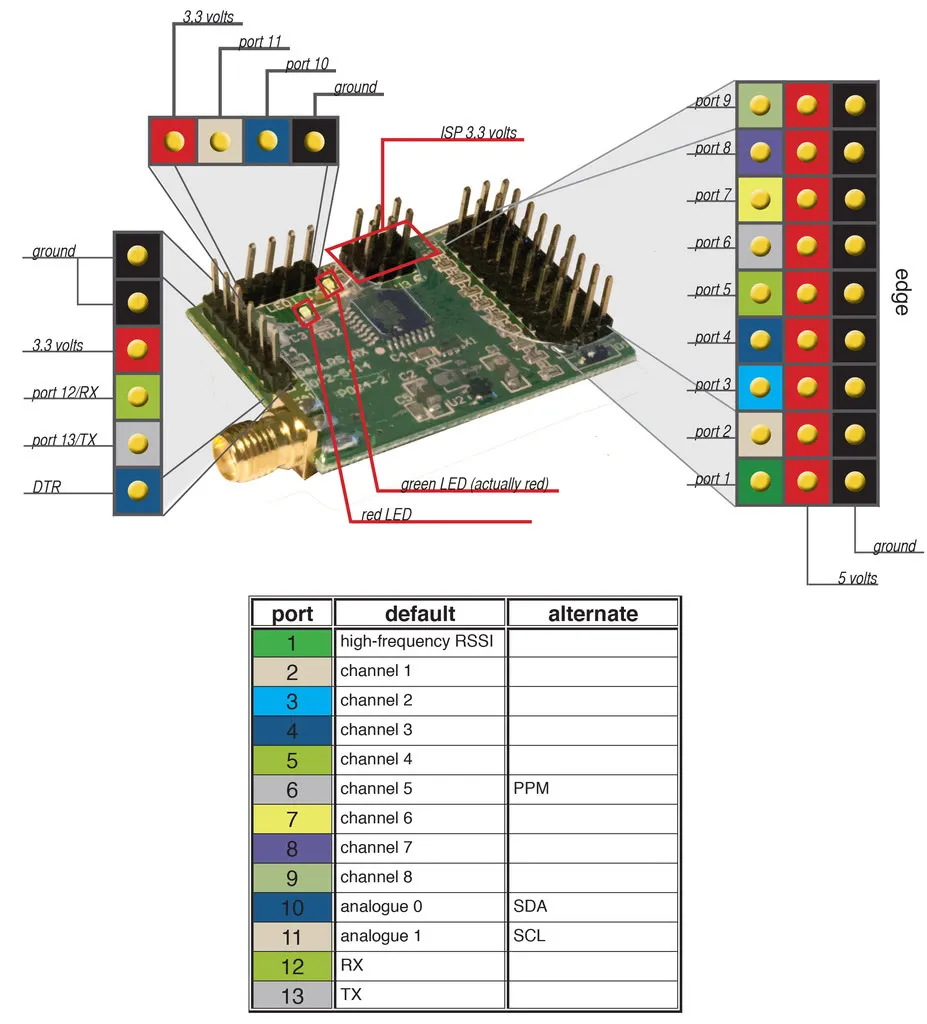

This is a quick guide on how to install the VK16U6 GPS on a CleanFlight or BetaFlight controller.

I've seen lots of skepticism about the latency of my Go FPV app, which allows folks to use their phone, instead of goggles, to fly FPV.

I recently migrated some of our data pipelines from our local Ambari-managed cluster to Amazon Elastic MapReduce to take advantage of the great cluster startup times, allowing scalable bootstrapping of clusters as necessary (and their subsequent termination).

This is a quick guide to building and flashing CleanFlight via ST-Link.

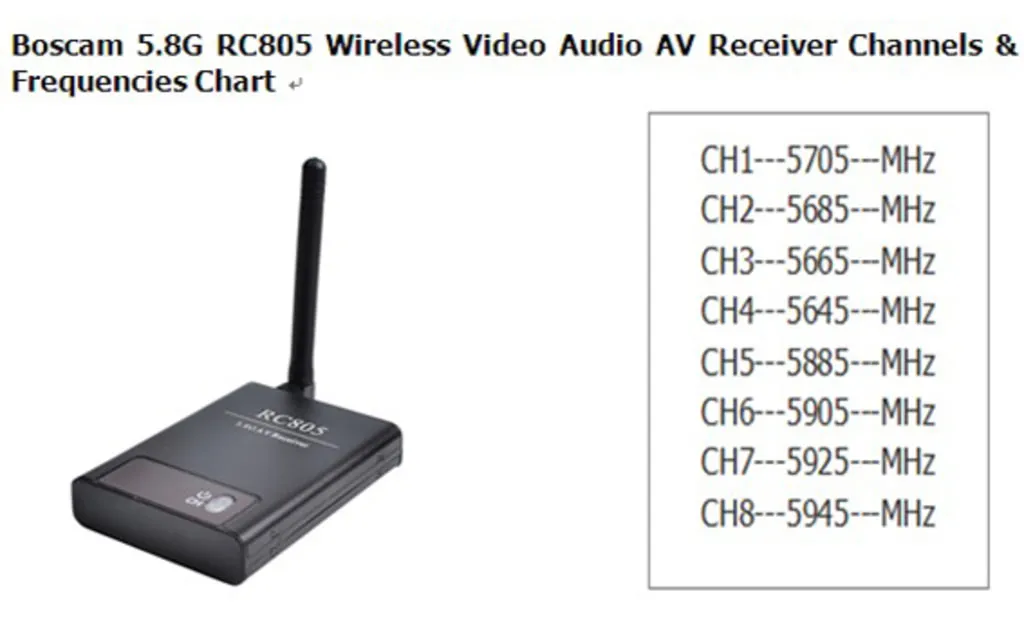

My notes on the various video channels implemented in several popular FPV transmitters and receivers.

This is a build guide for my DIY 3D "Go FPV" goggles. They have much higher resolution, depending on your phone, than Fatsharks and are waaaay cheaper as well. What is the resolution of these goggles you ask? Using my Nexus 5, which runs at 445 PPI 1080p IPS, that's 1920x1080 pixels. The screen is split between both eyes, so each eye gets 960x1080. Plus, if you have 2 cameras, they're capable of realtime 3D video.

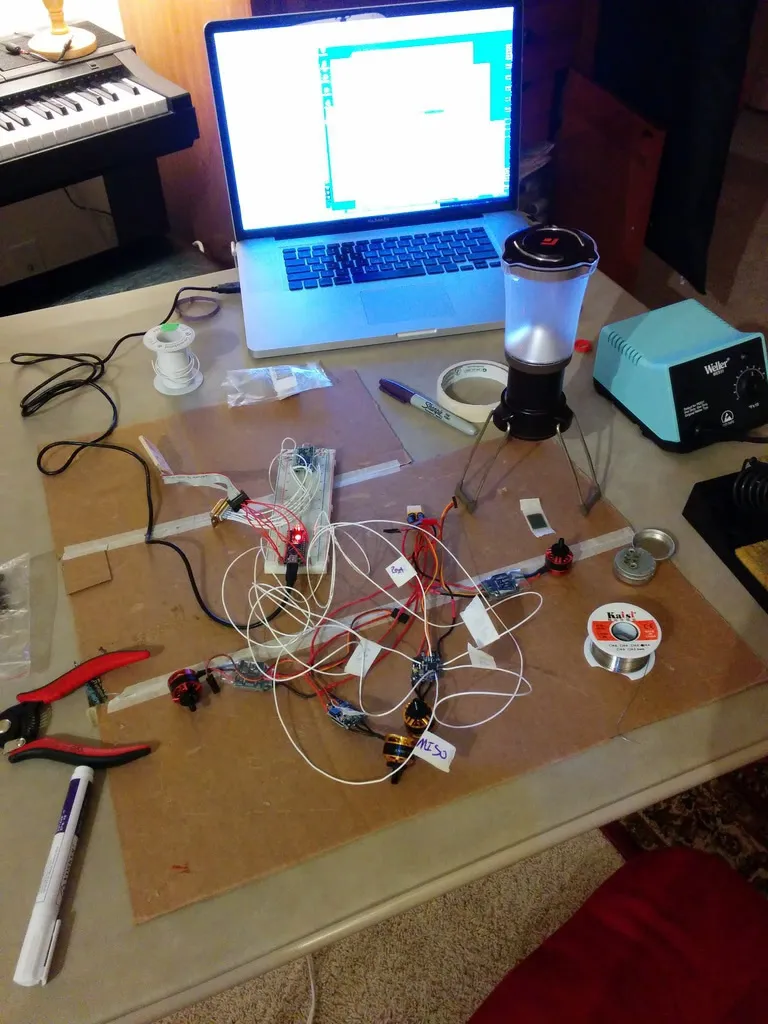

I've just got the last few bits of my first FPV250 working. Here's how to build your own FPV250 for under $200 (not including the camera). First a few photos of what you'll end up with at the end of this article.

This article is essentially the same as my article on flashing HobbyKing's F20 ESCs, so check that article out for the walkthrough. I even used the same programmer setup, leaving my original intact by adding the leads to the breadboard.

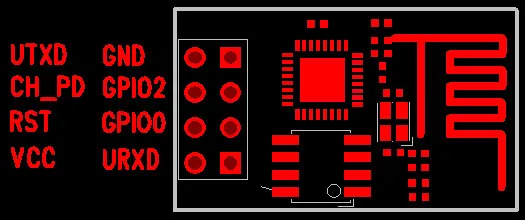

This is a quick guide on flashing an ESP8266 module via NodeMCU.

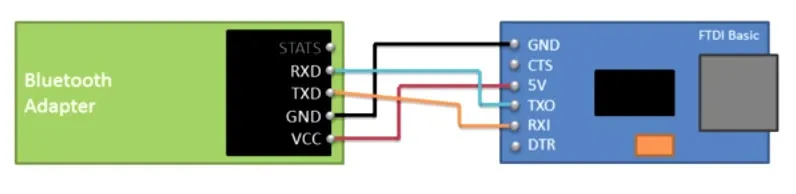

This is a quick guide on setting up the HC-05 and HC-06 Bluetooth modules commonly available on eBay.

Putting together the flight controller for my first mini quad I decided on setting up a full-featured OSD.

This guide will walk you through installing BlHeli and the BlHeli bootloader on HobbyKing F20 ESCs.

Importing dates with Pig into Hive from a CSV can be tricky. Depending on the format, the default Pig datetime parser likely won't work.

When trying to decide what size batteries to get for my new mini-quad, I did some pricing analysis, starting with hobbyking.com. Since ordering from hobbyking is always a bit of a gamble, I hope to add a few more suppliers soon and get a relative view of pricing across the landscape. I am curious about the actual pack composition. If anyone knows what manufacturers (aka, factories) make these, shoot me an email. I'd love to talk to them directly and find out if there is any real difference between brands and suppliers.

Enable ADB over WiFi to debug applications that require a USB connection to a different device.

I recently had a Windows backup in VHD format stored on an EXT3 drive, only readable by my Ubuntu VM. Don't ask me how this happened, but I needed to pull a few files off the backup.

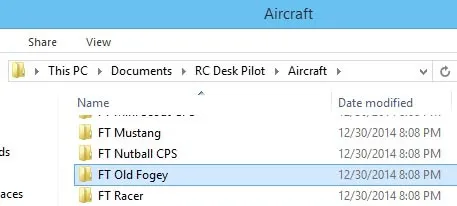

This guide will show you how to configure your Hobby King 2.4GHz 6Ch Tx & Rx V2 transmitter as the input to your flight simulator. I'm using the excellent and free RC Desk Pilot with the FT Old Fogey model to practice flying my Smash Drone.

I built a DIY RC plane to learn more about flight and embedded electronics.

This article is better titled, "how to impersonate a MAC" or "how to spoof a MAC on a Mac." So you're at a hotel and want to use the DropCam you brought along to keep an eye on the baby while you kick it in the next room. Only issue is that the hotel makes you enter your room number and a password they've given you, in a captive portal. What do you do?

This is a quick guide on updating the PostgreSQL 9.3 defaults to improve performance.

You've copied and pasted into an HTML text editor only to find weird things going on in the browser. Lines aren't wrapping correctly. It seems like it's just all one long word, but you know there are spaces. Looking further into the issue, you find that non-breaking spaces are everywhere. How do you fix it?
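
As a rough illustration of one possible fix (not necessarily the approach the full post takes), here is a minimal Python sketch. It assumes the offending characters are either the `&nbsp;` entity or the literal Unicode character U+00A0; the function name `normalize_spaces` is made up for this example.

```python
# Hypothetical helper: swap non-breaking spaces for ordinary spaces so the
# browser can wrap lines again. Assumes the text contains either the
# "&nbsp;" entity or the literal U+00A0 character.
NBSP_CHAR = "\u00a0"
NBSP_ENTITY = "&nbsp;"


def normalize_spaces(text: str) -> str:
    """Return text with non-breaking spaces replaced by regular spaces."""
    return text.replace(NBSP_ENTITY, " ").replace(NBSP_CHAR, " ")


if __name__ == "__main__":
    pasted = "Lines&nbsp;aren't\u00a0wrapping&nbsp;correctly."
    print(normalize_spaces(pasted))
```

Only the two non-breaking forms are touched, so other entities such as `&lt;` are left alone.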

An overview of the scriptable, open source, nginx-based reverse proxy -- OpenResty.

How to use ImageMagick to bulk-convert Canon raw format files to JPEG or PNG.

Capture the Firefox close event in a Firefox Plugin.